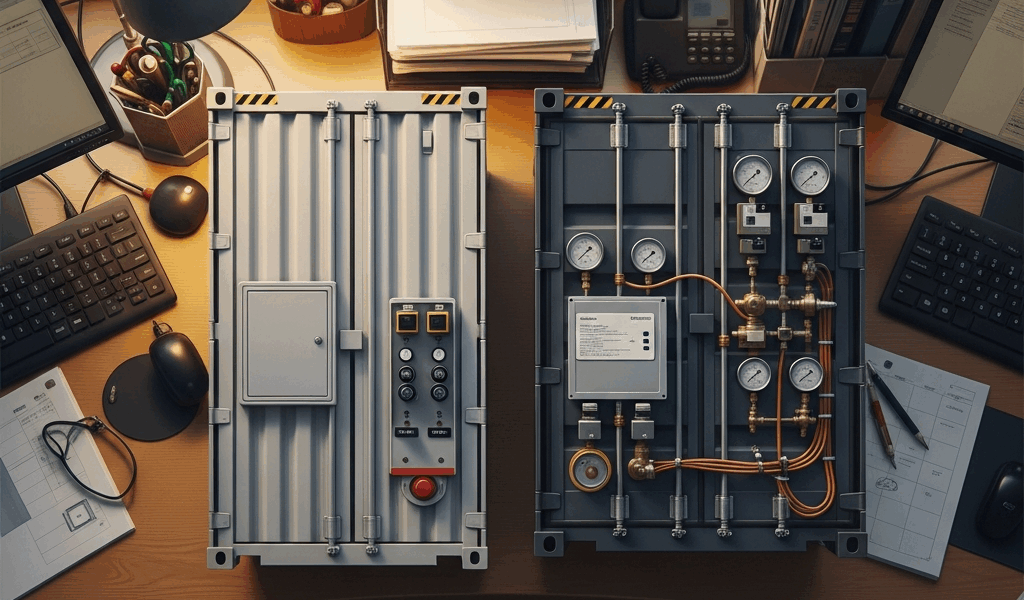

AWS ECS vs EKS for Small Teams — Which One to Pick

The Short Answer If You Just Need to Ship

AWS ECS vs EKS has gotten complicated with all the hot takes and vendor comparisons flying around. So let me cut through it: if you’re a small team and you need to pick one today, pick ECS. That’s it. The rest of this is just the evidence — and an honest look at the specific situations where you should flip that call.

EKS makes sense when at least one of these is true: someone on your team already runs Kubernetes in production and genuinely enjoys it, you have contractual or architectural reasons to stay portable across clouds, or you’re juggling more than a dozen microservices with complex traffic routing. None of those apply? ECS will get you further, faster, with less pain. Full stop.

I’ve watched small engineering teams — two, four, six people — burn their first month on a new product configuring kubectl contexts, debugging node group scaling policies, and reading Kubernetes upgrade changelogs. Not one hour of that moved the product an inch. That’s the real cost nobody’s feature comparison table ever captures.

What ECS Actually Gets Right for Small Teams

Burned by an over-engineered Kubernetes setup on a previous project, I started defaulting to ECS for greenfield work. Honestly? I was surprised by how far it goes before you hit a wall.

Fargate Removes an Entire Category of Work

The biggest win is Fargate. You define a task, specify CPU and memory — say, 0.5 vCPU and 1GB RAM — and AWS runs it. No EC2 instances. No node groups. No patching AMIs at 11pm because a CVE dropped on a Friday. The infrastructure just disappears from your to-do list.
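To make that concrete, here is a minimal sketch of what "define a task" amounts to. It builds the request body for a Fargate task definition sized at 0.5 vCPU and 1 GB. The family name, image URL, and port are hypothetical placeholders, and the size table covers only the smaller Fargate combinations.

```python
import json

# Valid Fargate memory sizes (MiB) per CPU size (1024 CPU units = 1 vCPU).
# Only the smaller sizes are listed; larger combinations also exist.
FARGATE_SIZES = {
    256: [512, 1024, 2048],
    512: [1024, 2048, 3072, 4096],
    1024: [2048, 3072, 4096, 5120, 6144, 7168, 8192],
}


def fargate_task_definition(family, image, vcpu, memory_gb, port):
    """Build a request body describing a single-container Fargate task."""
    cpu_units = int(vcpu * 1024)
    memory_mib = int(memory_gb * 1024)
    if memory_mib not in FARGATE_SIZES.get(cpu_units, []):
        raise ValueError(
            f"unsupported Fargate size: {cpu_units} CPU units / {memory_mib} MiB"
        )
    return {
        "family": family,
        "requiresCompatibilities": ["FARGATE"],
        "networkMode": "awsvpc",  # Fargate tasks must use awsvpc networking
        "cpu": str(cpu_units),    # task-level sizes are passed as strings
        "memory": str(memory_mib),
        "containerDefinitions": [
            {
                "name": family,
                "image": image,
                "essential": True,
                "portMappings": [{"containerPort": port, "protocol": "tcp"}],
            }
        ],
    }


# Hypothetical service name and image, for illustration only.
task_def = fargate_task_definition("web-api", "example.com/web-api:1.0", 0.5, 1, 8080)
print(json.dumps(task_def, indent=2))
```

With boto3 installed and credentials configured, a dictionary like this could be passed to `boto3.client("ecs").register_task_definition(**task_def)`. A real task would usually also need an execution role for pulling images and shipping logs, which this sketch leaves out.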

For a small team, this is enormous. You’re not hiring a dedicated DevOps engineer. You’re a backend developer who also owns deployments. Fargate makes that survivable, because infrastructure management stops eating your entire week.

IAM Integration That Actually Feels Native

ECS task roles map directly to IAM. Create a role, attach it to a task definition, and your container gets scoped AWS credentials automatically. It works exactly the way you’d expect AWS things to work if you’ve spent any real time in the console. EKS has IAM Roles for Service Accounts — IRSA — which does the same job. But the configuration involves OIDC provider setup, Kubernetes annotations, and several steps that feel like assembling furniture with instructions written for a completely different model. Don’t make my mistake of assuming it’s equivalent effort. It isn’t.
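The difference in effort shows up in the trust policy each approach needs on the IAM role. The sketch below builds both as plain dictionaries. The account ID, OIDC issuer, namespace, and service account name are hypothetical placeholders, not values from any real cluster.

```python
import json


def ecs_task_trust_policy():
    """Trust policy that lets ECS tasks assume a role. No cluster-specific inputs."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "ecs-tasks.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def irsa_trust_policy(account_id, oidc_issuer, namespace, service_account):
    """Trust policy for IRSA. oidc_issuer is the cluster's issuer URL without https://."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    "Federated": f"arn:aws:iam::{account_id}:oidc-provider/{oidc_issuer}"
                },
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        # Restrict the role to one Kubernetes service account.
                        f"{oidc_issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}",
                        f"{oidc_issuer}:aud": "sts.amazonaws.com",
                    }
                },
            }
        ],
    }


# Placeholder values, for illustration only.
issuer = "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE"
ecs_policy = ecs_task_trust_policy()
irsa_policy = irsa_trust_policy("123456789012", issuer, "default", "web-api")
print(json.dumps(ecs_policy, indent=2))
print(json.dumps(irsa_policy, indent=2))
```

The ECS version is the same for every task role. The IRSA version depends on a per-cluster OIDC provider that must already be registered in IAM, and the Kubernetes service account still needs an `eks.amazonaws.com/role-arn` annotation pointing back at the role.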

The Learning Curve Is Honest

ECS has a smaller surface area. Task definitions, services, clusters, a load balancer. That’s most of it. A developer who has never touched containers can be deploying to ECS within a day — sometimes less. The AWS console actually helps here rather than obscuring things, which, if you’ve used some AWS consoles, you know isn’t guaranteed.

Where ECS Hits Its Ceiling

ECS does have real limits. The ecosystem is AWS-specific: no Helm charts, little CNCF tooling, and no service mesh story beyond AWS’s own offerings. App Mesh was the usual answer, but it was never battle-tested the way Istio or Linkerd are, and AWS has announced that it will end support for App Mesh on September 30, 2026, steering ECS users toward Service Connect instead. If you need advanced traffic splitting, circuit breaking at the infrastructure layer, or cross-cluster federation, you’ll be working against the grain. That ceiling is high enough that most small teams never reach it. But it exists, and you should know it’s there.

Where EKS Makes Sense and What It Costs You

I probably should have opened with this section, because the cost piece changes the conversation immediately for budget-conscious teams.

The Real Price of Running EKS

EKS charges $0.10 per hour for the managed control plane. That’s about $72 per month (at 720 hours) before a single workload runs. Add two t3.medium nodes at roughly $0.0416/hr each and you’re around $132/month for a minimal cluster, one you’d be nervous running real production traffic on. A more realistic small-team production setup (three m5.large nodes plus storage and data transfer) lands between $250 and $400/month before your actual application costs. ECS has no control plane fee, and Fargate bills only for the vCPU and memory of running tasks, so the bill drops to near zero when nothing is running. That gap matters when you’re pre-revenue or watching every dollar.
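The arithmetic behind those figures is easy to reproduce and adapt. In the sketch below, the control plane and t3.medium rates come from the paragraph above. The Fargate per-vCPU and per-GB rates are my assumption (Linux/x86 on-demand pricing in us-east-1 as I remember it), so check the current pricing page before relying on them.

```python
HOURS_PER_MONTH = 720  # 30 days, which is what the $72 control plane figure implies

EKS_CONTROL_PLANE_HOURLY = 0.10  # from the article
T3_MEDIUM_HOURLY = 0.0416        # from the article

# Assumed Fargate rates (Linux/x86, us-east-1). Verify against current pricing.
FARGATE_VCPU_HOURLY = 0.04048
FARGATE_GB_HOURLY = 0.004445


def eks_monthly(node_count, node_hourly, hours=HOURS_PER_MONTH):
    """Control plane plus always-on worker nodes."""
    return (EKS_CONTROL_PLANE_HOURLY + node_count * node_hourly) * hours


def fargate_monthly(task_count, vcpu, memory_gb, hours=HOURS_PER_MONTH):
    """Fargate bills per running task for the vCPU and memory it reserves."""
    per_task_hourly = vcpu * FARGATE_VCPU_HOURLY + memory_gb * FARGATE_GB_HOURLY
    return task_count * per_task_hourly * hours


minimal_eks = eks_monthly(2, T3_MEDIUM_HOURLY)
two_small_tasks = fargate_monthly(2, 0.5, 1)
idle_fargate = fargate_monthly(0, 0.5, 1)

print(f"Minimal EKS cluster:         ${minimal_eks:.2f}/month")
print(f"Two 0.5 vCPU / 1 GB tasks:   ${two_small_tasks:.2f}/month")
print(f"Fargate with no tasks:       ${idle_fargate:.2f}/month")
```

Neither function includes load balancers, storage, or data transfer, which apply to both platforms and are left out to keep the comparison focused on compute.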

The Hidden Cost — Operator Time

The dollar figure isn’t even the main issue. Kubernetes upgrades are. EKS supports each Kubernetes minor version for a limited window (roughly 14 months of standard support at the time of writing), after which you either upgrade or pay extra for extended support. When that deadline hits, you need to upgrade the control plane, upgrade and validate node groups, check for deprecated API versions in your manifests, and test everything. On a small team that’s a full engineer-day, sometimes two, every nine to twelve months. On ECS, AWS handles the control plane entirely. You don’t do cluster upgrades. That time stays yours.
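The "check for deprecated API versions" step is the one most easily forgotten, so here is a rough sketch of what it involves. The table holds a handful of well-known removals and is far from complete, the manifest text is a made-up example, and the parsing is a simple regex pass rather than a real YAML parser. Dedicated tools do this more thoroughly.

```python
import re

# (apiVersion, kind) -> first Kubernetes version that no longer serves it.
# A small, incomplete sample of well-known removals.
REMOVED_APIS = {
    ("extensions/v1beta1", "Ingress"): (1, 22),
    ("networking.k8s.io/v1beta1", "Ingress"): (1, 22),
    ("batch/v1beta1", "CronJob"): (1, 25),
    ("policy/v1beta1", "PodDisruptionBudget"): (1, 25),
    ("autoscaling/v2beta2", "HorizontalPodAutoscaler"): (1, 26),
}

API_RE = re.compile(r"^apiVersion:[ \t]*(\S+)", re.MULTILINE)
KIND_RE = re.compile(r"^kind:[ \t]*(\S+)", re.MULTILINE)


def find_removed_apis(manifest_text, target_version):
    """Return (apiVersion, kind, removed_in) for objects the target version rejects."""
    findings = []
    # Split a multi-document YAML stream on its "---" separators.
    for doc in re.split(r"^---[ \t]*$", manifest_text, flags=re.MULTILINE):
        api = API_RE.search(doc)
        kind = KIND_RE.search(doc)
        if not api or not kind:
            continue
        key = (api.group(1).strip("\"'"), kind.group(1).strip("\"'"))
        removed_in = REMOVED_APIS.get(key)
        if removed_in is not None and target_version >= removed_in:
            findings.append((key[0], key[1], removed_in))
    return findings


# Made-up manifests, for illustration only.
MANIFESTS = """\
apiVersion: batch/v1beta1
kind: CronJob
metadata:
  name: nightly-report
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-api
"""

for api_version, kind, removed_in in find_removed_apis(MANIFESTS, (1, 25)):
    print(f"{kind} uses {api_version}, removed in {removed_in[0]}.{removed_in[1]}")
```

The scan only looks at top-level `apiVersion` and `kind` fields in files on disk, so it misses objects nested inside lists and anything rendered at deploy time by Helm.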

When EKS Earns Its Cost

EKS is genuinely the right answer when you need the Kubernetes ecosystem. Helm charts for cert-manager, external-dns, and Argo CD are mature and widely supported. Running twelve or more services with complicated inter-service dependencies? Kubernetes primitives — deployments, replica sets, horizontal pod autoscalers — give you more expressive control than ECS task definitions ever will. The tooling compounds over time. The investment pays off. Just not usually at the four-engineer stage. That’s the part people keep skipping over.
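As one example of what those primitives do for you, the horizontal pod autoscaler’s core scaling rule, as described in the Kubernetes documentation, is a simple ratio. The sketch below is a simplified version of it: it ignores pod readiness, missing metrics, and stabilization windows, and the replica bounds and utilization numbers are made up for illustration.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA rule: scale replicas by the ratio of observed to target metric."""
    ratio = current_metric / target_metric
    # Skip scaling when the ratio is close enough to 1.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


# 4 pods averaging 90% CPU against a 60% target: scale out.
scale_out = desired_replicas(4, 90, 60, min_replicas=2)
# 4 pods at 62% against a 60% target: within tolerance, no change.
steady = desired_replicas(4, 62, 60, min_replicas=2)
# 10 pods at 20% against a 60% target: scale in.
scale_in = desired_replicas(10, 20, 60, min_replicas=2)
print(scale_out, steady, scale_in)
```

ECS service auto scaling can also track a target metric, so this is not something ECS lacks outright. The point is that on Kubernetes the behavior is one declarative object alongside everything else in the cluster.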

The Real Deciding Factors — Not Just Features

Here are the actual scenarios that should tip your decision. Not a feature checklist — concrete triggers.

You Have Multi-Cloud Ambitions

If your roadmap includes running workloads on GCP or Azure within the next eighteen months (compliance reasons, a customer requirement, a CTO keeping optionality open), EKS wins. ECS is an AWS-only API: even ECS Anywhere, which runs tasks on your own hardware, still depends on the AWS control plane. Kubernetes runs on every major cloud and on-prem. The portability is real and worth the overhead, but only if the requirement is real. Hypothetical portability has burned a lot of small teams.

Someone on Your Team Already Knows Kubernetes

This one is simple. I’m apparently the person on my team who gets handed whatever infrastructure decision nobody else wants, and I’ve learned this the hard way: if you have a senior engineer who has run Kubernetes in production, maintained cluster upgrades, and debugged pod scheduling failures at 2am — the calculus changes completely. Their existing knowledge eliminates most of the hidden costs that make EKS painful. Forcing that person onto ECS means they spend time learning a proprietary abstraction instead of applying skills they already have. That’s a waste of a good engineer.

You Need a Service Mesh

mTLS between services. Traffic mirroring. Fine-grained observability at the network layer. If you’re at that point, you need a service mesh — at least if you want to do it properly. Istio and Linkerd both run on Kubernetes. AWS App Mesh runs on ECS, but the documentation is thinner, the community is smaller, and the operational patterns are less established. At this level of networking complexity, EKS with Istio is the more supported path. It’s not really a close call.

You’re Running Many Microservices

There’s a rough inflection point around ten to fifteen distinct services where ECS service management starts feeling repetitive and Kubernetes abstractions start earning their complexity. Helm templates, namespace-level RBAC, cluster-wide policies — they start paying dividends when you’re deploying that many moving parts. Below that threshold, ECS with a few well-named services in a single cluster is faster to reason about. Faster to debug at midnight, too.
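The triggers in this section can be condensed into a rough checklist. The sketch below encodes them as a function. The field names are my own, and the threshold of twelve services is an assumption picked from the middle of the "ten to fifteen" range, not a hard rule.

```python
from dataclasses import dataclass

# Assumption: a single cutoff inside the article's "ten to fifteen" range.
MANY_SERVICES_THRESHOLD = 12


@dataclass
class TeamProfile:
    has_production_k8s_experience: bool
    has_real_multicloud_requirement: bool  # on the roadmap, not hypothetical
    needs_service_mesh: bool
    service_count: int


def recommend(profile):
    """Return ("EKS" or "ECS", reasons). Any single trigger tips the call to EKS."""
    reasons = []
    if profile.has_production_k8s_experience:
        reasons.append("someone already runs Kubernetes in production")
    if profile.has_real_multicloud_requirement:
        reasons.append("a concrete multi-cloud requirement exists")
    if profile.needs_service_mesh:
        reasons.append("a service mesh is needed")
    if profile.service_count >= MANY_SERVICES_THRESHOLD:
        reasons.append(f"{profile.service_count} services to manage")
    return ("EKS" if reasons else "ECS", reasons)


# A four-person team with five services and no Kubernetes background.
small_team = TeamProfile(False, False, False, 5)
choice, why = recommend(small_team)
print(choice, why)
```

Treat the output as a conversation starter. The inputs are judgment calls, especially whether a multi-cloud requirement is real or merely aspirational.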

How to Start Small and Migrate Later If You Need To

The fear I hear most often from small teams is: what if we pick ECS and then outgrow it? The implicit worry is that switching to EKS later will be catastrophic. It won’t be.

Your application code doesn’t care whether it runs on ECS or EKS. It’s a Docker container either way. The migration work lives entirely in the infrastructure layer — rewriting task definitions as Kubernetes manifests, spinning up an EKS cluster, migrating CI/CD pipelines to push to the new cluster, updating service discovery if you’ve been using AWS Cloud Map. Real work. Scoped work. A team that’s grown to fifteen engineers with some DevOps capacity can execute that migration in two to four weeks without touching a single line of application code.
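To give a feel for the "rewriting task definitions as Kubernetes manifests" step, here is a minimal sketch that maps the common fields of an ECS task definition onto a Kubernetes Deployment. The task definition is a made-up example. The mapping skips IAM roles, secrets, logging, health checks, and load balancer wiring, which is where most of the real migration effort goes.

```python
import json


def task_definition_to_deployment(task_def, replicas=2):
    """Translate the basic fields of an ECS task definition into a Deployment."""
    containers = []
    for c in task_def["containerDefinitions"]:
        containers.append({
            "name": c["name"],
            "image": c["image"],
            "ports": [{"containerPort": p["containerPort"]}
                      for p in c.get("portMappings", [])],
            "env": [{"name": e["name"], "value": e["value"]}
                    for e in c.get("environment", [])],
        })

    # For a single-container task, carry the task-level size over as requests.
    # ECS counts 1024 CPU units per vCPU; Kubernetes counts 1000 millicores.
    if len(containers) == 1 and "cpu" in task_def and "memory" in task_def:
        millicores = int(task_def["cpu"]) * 1000 // 1024
        containers[0]["resources"] = {
            "requests": {"cpu": f"{millicores}m", "memory": f"{task_def['memory']}Mi"},
            "limits": {"memory": f"{task_def['memory']}Mi"},
        }

    labels = {"app": task_def["family"]}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": task_def["family"], "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {"metadata": {"labels": labels},
                         "spec": {"containers": containers}},
        },
    }


# Made-up task definition, for illustration only.
example_task_def = {
    "family": "web-api",
    "cpu": "512",
    "memory": "1024",
    "containerDefinitions": [{
        "name": "web-api",
        "image": "example.com/web-api:1.0",
        "portMappings": [{"containerPort": 8080}],
        "environment": [{"name": "LOG_LEVEL", "value": "info"}],
    }],
}

deployment = task_definition_to_deployment(example_task_def)
print(json.dumps(deployment, indent=2))
```

The output is printed as JSON, which `kubectl apply -f` accepts alongside YAML. Notice that nothing in the container image or its environment had to change, which is the point of the paragraph above.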

The tools that make this easier exist today. Kompose translates Docker Compose files into Kubernetes manifests as a starting point. If you’ve kept your ECS task definitions parameterized and your container images environment-agnostic — and you should — the migration is mostly infrastructure YAML and DNS changes. Tedious, not catastrophic.

Start with what lets you ship. ECS is that thing for most small teams. Build the product, grow the team, revisit the infrastructure decision when you have the headcount and complexity that makes EKS’s overhead worthwhile. The engineers arguing for EKS at the four-person stage are usually optimizing for the company they want to be in three years, not the one they actually are today. Ship first. Migrate when the pain is real, not hypothetical.
