AWS Integration Patterns: SQS, SNS and EventBridge

Integration patterns have gotten complicated with all the jargon and architecture diagrams flying around. As someone who’s spent years wiring up distributed systems on AWS, I’ve learned a lot about connecting services without losing my mind. Today, I’ll walk through the patterns I keep coming back to.

Look, if you’ve ever stared at a whiteboard full of arrows pointing between boxes and wondered “how do I actually build this?” — you’re not alone. I’ve been there more times than I can count. The truth is, picking the right integration pattern can save you months of headaches down the road. Pick the wrong one, and you’ll be refactoring at 2 AM on a Saturday.

Point-to-Point Integration

Point-to-Point (P2P) integration is the simplest thing in the world — you connect System A directly to System B. Done. Ship it. It works great when you’ve got two services that need to talk. I used this approach on my first serious project, and honestly, it was fine… until we had twelve services all needing to talk to each other. That’s when things got ugly.

The problem with P2P is math. Two systems? One connection. Three? Three connections. Ten systems? You’re looking at 45 connections. Every new system you add makes the web denser. I’ve seen production environments where nobody could draw the dependency graph anymore because it looked like a plate of spaghetti.
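Those numbers come from counting pairs: if every system talks to every other one, n systems need n(n-1)/2 connections. Here’s a quick sketch to check the arithmetic (the function name is mine, purely for illustration):

```python
def p2p_connections(n: int) -> int:
    """Number of direct links needed to connect every pair of n systems."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


for n in (2, 3, 10, 12):
    print(n, "systems ->", p2p_connections(n), "connections")
```

This is the worst case, where every pair is connected. Real systems are usually sparser, but the growth is still quadratic.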

Hub-and-Spoke

Hub-and-spoke fixes the spaghetti problem by putting a central hub in the middle. Every system connects to the hub, and the hub routes messages where they need to go. Think of it like an airport — flights don’t go directly between every city, they route through hubs like Atlanta or Chicago.

I’ve used this pattern with Amazon SQS as the central routing point, and it cleaned up our architecture dramatically. (Strictly speaking, SQS only buffers messages, so the routing rules themselves usually live in SNS, EventBridge, or a small dispatcher in front of the queues.) The downside? The hub becomes a single point of failure. If it goes down, everything stops. So you’d better make sure you’ve got redundancy built in. AWS managed services handle most of this for you, but it’s still something to think about.
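To make the shape of the pattern concrete, here’s a toy in-memory hub. It’s a sketch under loud assumptions: the `Hub` class, the message types, and the spoke names are all made up, and a real hub would be a managed service, not a Python object.

```python
from collections import defaultdict, deque


class Hub:
    """Toy hub: every spoke sends to the hub, never directly to another spoke."""

    def __init__(self):
        self._routes = defaultdict(list)    # message type -> destination names
        self._inboxes = defaultdict(deque)  # destination name -> pending messages

    def add_route(self, message_type, destination):
        self._routes[message_type].append(destination)

    def send(self, message_type, payload):
        """Copy the message into each destination's inbox; return the copy count."""
        destinations = self._routes.get(message_type, [])
        for destination in destinations:
            self._inboxes[destination].append(
                {"type": message_type, "payload": payload}
            )
        return len(destinations)

    def receive(self, destination):
        """Pop the oldest message for a spoke, or None if its inbox is empty."""
        inbox = self._inboxes[destination]
        return inbox.popleft() if inbox else None


hub = Hub()
hub.add_route("order.placed", "inventory")
hub.add_route("order.placed", "billing")
hub.send("order.placed", {"order_id": 1})
print(hub.receive("inventory"))
```

Adding a new consumer means adding one route on the hub. None of the existing spokes change, which is the whole point.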

Enterprise Service Bus (ESB)

ESB takes the hub-and-spoke idea and supercharges it. Your central bus doesn’t just route messages: it also transforms data, converts protocols, and applies routing logic. It’s like the Swiss Army knife of integration.

I’ll be real though: ESBs can become massive, unwieldy beasts if you’re not careful. I worked at a company where the ESB turned into a god service that knew about every business rule in the entire organization. That’s not what you want. Use it for what it’s good at — routing and transformation — and keep the business logic in your services.

Microservices Integration

This is where most teams are heading these days, and for good reason. Break your application into small, independent services that each do one thing well. They talk to each other over lightweight protocols like HTTP/REST or through messaging services like SQS (queues) and SNS (pub/sub topics).

The beauty of microservices is that teams can work independently. Your payments team can deploy their service without coordinating with the inventory team. The catch is that you’re trading monolith complexity for distributed systems complexity. And distributed systems are hard. Really hard. You need to think about network failures, data consistency, service discovery — the list goes on.

Service-Oriented Architecture (SOA)

SOA was the precursor to microservices, and honestly, it gets a bad rap these days. The core idea is sound: organize your application as a collection of reusable services that communicate over a network. Each service handles a specific business function.

Where SOA went wrong for a lot of organizations was the tooling. SOAP, WSDL, XML everywhere — it was heavy. But the architectural principles? Still solid. Many AWS architectures today are essentially SOA done right, just with lighter-weight protocols and better tooling.

File Transfer

Sometimes the simplest approach is the right one. File transfer means exactly what it sounds like — you dump data into a file and another system picks it up. FTP, SFTP, S3 bucket drops. I’ve used this pattern with Amazon S3 more times than I can count, especially for batch processing scenarios.

It’s not glamorous, but it’s reliable. Got a nightly data feed from a partner? Drop it in an S3 bucket, trigger a Lambda function, process the data. Simple. Just don’t try to use it for real-time scenarios — that’s not what it’s built for.
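Here’s roughly what the Lambda side of that bucket drop can look like. This is a minimal sketch: the bucket name and key are made up, the handler only lists what arrived, and the actual processing is left out. It relies on the S3 event notification layout, where each record carries the bucket name and a URL-encoded object key.

```python
from urllib.parse import unquote_plus


def extract_s3_objects(event):
    """Return (bucket, key) pairs from an S3 event notification payload."""
    objects = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded (spaces become '+'), so decode them.
        key = unquote_plus(record["s3"]["object"]["key"])
        objects.append((bucket, key))
    return objects


def handler(event, context):
    """Lambda entry point: report each new file; real processing would go here."""
    objects = extract_s3_objects(event)
    for bucket, key in objects:
        print(f"new file: s3://{bucket}/{key}")
    return {"processed": len(objects)}


# Hypothetical event, trimmed to the fields the handler actually reads.
sample_event = {
    "Records": [
        {"s3": {"bucket": {"name": "partner-feed"},
                "object": {"key": "2024/nightly+export.csv"}}}
    ]
}
print(handler(sample_event, None))
```

Decoding the key is the step people tend to forget. It only bites when a partner sends a filename with spaces or special characters.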

Shared Database

Multiple systems reading and writing to the same database. It works, but I’ve got scars from this approach. The moment two teams need to modify the same table schema, you’re in for a world of coordination pain. And good luck deploying independently when everyone’s coupled to the same data model.

That said, for smaller teams or early-stage projects, a shared database can be pragmatic. Just know that you’ll probably need to break it apart eventually as your system grows. I usually recommend starting here only if you have fewer than three services touching the database.

Remote Procedure Calls (RPC)

RPC lets you call a function on a remote server like it’s running locally. gRPC has made this pattern popular again, especially for internal service-to-service communication where you need low latency and strong typing. I’ve used gRPC between microservices on ECS, and the performance is excellent.

The gotcha with RPC is that it makes remote calls feel local, which can trick you into forgetting about network failures. Always build in retries, timeouts, and circuit breakers. Your remote call will fail eventually — it’s just a matter of when.
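Here’s a small sketch of two of those safeguards: retries with exponential backoff, and a basic circuit breaker. The class and function names are mine, and the thresholds are arbitrary. The timeout itself belongs on the client call and isn’t shown here.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are being rejected."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0):
        self.max_failures = max_failures
        self.reset_after = reset_after  # seconds to wait before a trial call
        self.failures = 0
        self.opened_at = None

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if time.monotonic() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open, skipping remote call")
            self.opened_at = None  # cool-down elapsed: allow one trial call
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = time.monotonic()
            raise
        self.failures = 0  # any success closes the circuit again
        return result


def call_with_retries(func, attempts=3, base_delay=0.1):
    """Retry func with exponential backoff; re-raise the last error."""
    for attempt in range(attempts):
        try:
            return func()
        except CircuitOpenError:
            raise  # retrying against an open circuit is pointless
        except Exception:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)
```

In practice you’d wrap the remote call as `call_with_retries(lambda: breaker.call(stub_method, request))`, where `stub_method` and `request` stand in for whatever your RPC client exposes.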

Message-Oriented Middleware (MOM)

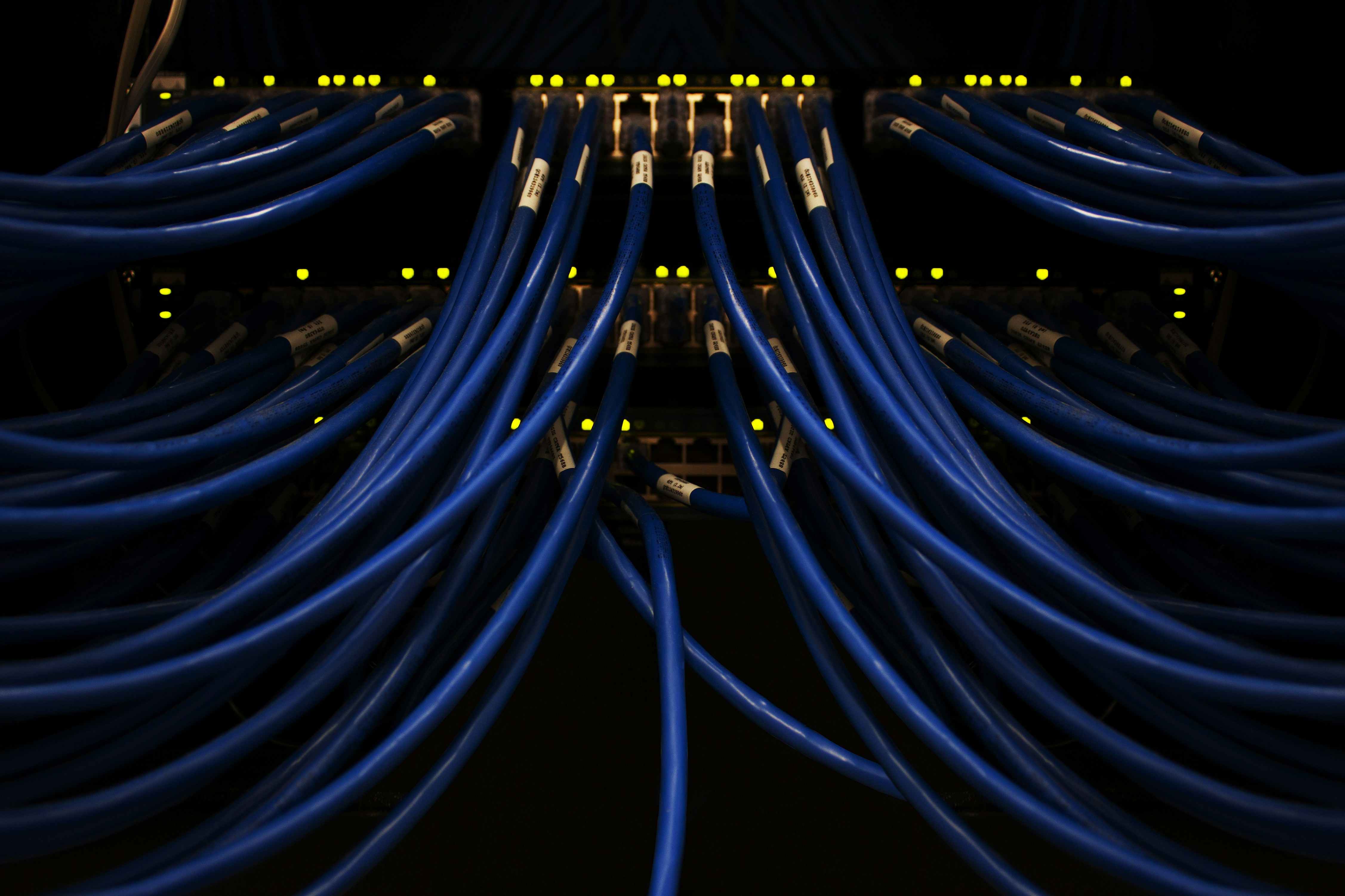

This is where things get really interesting, and it’s where AWS shines. Message-oriented middleware — think Amazon SQS, SNS, and Apache Kafka on Amazon MSK — lets your systems communicate by passing messages through queues or topics. The sender drops a message and moves on. The receiver picks it up when it’s ready.

I’m a huge fan of this pattern. It decouples your systems beautifully. Service A doesn’t need to know that Service B exists. It just publishes a message saying “hey, an order was placed.” Whoever cares about that event can subscribe. That’s what makes message-based integration endearing to us architecture nerds.
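Here’s the “drop a message and move on” idea in miniature, using Python’s standard `queue` module as a stand-in for an SQS queue. It’s only an in-process sketch, and the order IDs are made up. The thing to notice is that the producer finishes before the consumer even starts.

```python
import queue
import threading

orders = queue.Queue()  # stands in for a message queue such as SQS
processed = []


def consumer():
    """Pull messages until the stop sentinel (None) arrives."""
    while True:
        message = orders.get()
        if message is None:
            break
        processed.append(f"handled order {message['order_id']}")


# The producer enqueues and moves on; no consumer is running yet.
for order_id in (101, 102, 103):
    orders.put({"order_id": order_id})

# The consumer starts later and drains whatever is waiting.
worker = threading.Thread(target=consumer)
worker.start()
orders.put(None)  # tell the consumer to stop once it has caught up
worker.join()

print(processed)
```

The producer never references the consumer, only the queue. Swap the consumer out, or add a second one, and the producing code doesn’t change.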

Data Streaming

Data streaming is message-oriented middleware’s faster, cooler cousin. Technologies like Amazon Kinesis and Apache Kafka handle continuous data flows in real time. I’ve built streaming pipelines for analytics dashboards that process thousands of events per second, and it’s genuinely impressive how well these tools handle the load.

If you’re building anything that needs live data — real-time analytics, IoT sensor processing, fraud detection — streaming is your friend. Just be prepared for a steeper learning curve compared to simple queue-based approaches.
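A lot of that learning curve is windowing: turning an endless flow into results you can act on. Here’s a minimal sketch of a tumbling-window count in plain Python. It assumes events arrive in timestamp order, which real streams don’t guarantee, and the function name and sample data are made up.

```python
def tumbling_counts(events, window_seconds):
    """Yield (window_start, count) as each window closes.

    Assumes (timestamp, payload) pairs arrive in timestamp order.
    Windows with no events are skipped rather than reported as zero.
    """
    current_start = None
    count = 0
    for timestamp, _payload in events:
        start = int(timestamp // window_seconds) * window_seconds
        if current_start is None:
            current_start = start
        if start != current_start:
            yield current_start, count  # the previous window is complete
            current_start, count = start, 0
        count += 1
    if current_start is not None:
        yield current_start, count  # flush the final, still-open window


# Hypothetical sensor readings: (seconds since start, payload).
readings = [(0, "a"), (10, "b"), (61, "c"), (125, "d"), (130, "e")]
for window_start, count in tumbling_counts(readings, window_seconds=60):
    print(f"window starting at {window_start}s: {count} events")
```

Because it’s a generator, results come out as soon as a window closes, not after the whole input has been read. That’s the property that separates streaming from batch.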

API Integration

APIs are the bread and butter of modern integration. You expose an endpoint, someone calls it, you send back data. Amazon API Gateway makes this easy on AWS, with built-in support for authentication, throttling, and monitoring.

RESTful APIs are still the most common, though GraphQL is gaining ground for scenarios where clients need flexible data queries. I’ve built dozens of API integrations using API Gateway backed by Lambda functions, and the combination is hard to beat for simplicity and cost-effectiveness.
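For a sense of how little code that combination needs, here’s a sketch of a Lambda handler behind an API Gateway proxy integration. The `/orders/{id}` route and the in-memory `ORDERS` data are assumptions for illustration. The response shape (a `statusCode`, `headers`, and a string `body`) is what a proxy integration expects back.

```python
import json

# Hypothetical data; a real service would read from a database.
ORDERS = {"42": {"id": "42", "status": "shipped"}}


def _response(status, body):
    """Build a response in the shape a Lambda proxy integration expects."""
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(body),  # the body must be a string, not a dict
    }


def handler(event, context):
    """Handle GET /orders/{id}."""
    # pathParameters can be null when the route has no path variables.
    order_id = (event.get("pathParameters") or {}).get("id")
    order = ORDERS.get(order_id)
    if order is None:
        return _response(404, {"message": "order not found"})
    return _response(200, order)


print(handler({"pathParameters": {"id": "42"}}, None))
```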

Event-Driven Integration

Event-driven architecture is where I spend most of my time these days. Systems publish events when something interesting happens, and other systems react to those events. Amazon EventBridge is the go-to service here, and it’s fantastic. You define rules that match events, and EventBridge routes them to the right targets.

What I love about event-driven patterns is how naturally they model real business processes. A customer places an order? That’s an event. Inventory gets reserved, payment gets processed, a shipping label gets created — all triggered by that one event. No tight coupling between any of those services.
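Here’s a sketch of how rule matching works for that order example. The patterns use the EventBridge convention of listing the allowed values for each field, but `matches` is my own simplified stand-in. It handles exact-value matching only and ignores the richer filters the real service supports. The source name, rule names, and event fields are all made up.

```python
def matches(pattern, event):
    """Simplified matcher: every pattern field must allow the event's value."""
    for field, expected in pattern.items():
        if field not in event:
            return False
        actual = event[field]
        if isinstance(expected, dict):
            # Nested pattern (for example under "detail"): recurse.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False
    return True


order_placed = {
    "source": "com.example.orders",
    "detail-type": "OrderPlaced",
    "detail": {"orderId": "A-1001", "channel": "web"},
}

on_order_placed = {"source": ["com.example.orders"], "detail-type": ["OrderPlaced"]}

# Hypothetical rules: rule name -> event pattern.
rules = {
    "reserve-inventory": on_order_placed,
    "process-payment": on_order_placed,
    "create-shipping-label": on_order_placed,
    "send-refund-email": {
        "source": ["com.example.orders"],
        "detail-type": ["OrderRefunded"],
    },
    "flag-mobile-orders": {
        "detail-type": ["OrderPlaced"],
        "detail": {"channel": ["mobile"]},
    },
}

triggered = [name for name, pattern in rules.items() if matches(pattern, order_placed)]
print(triggered)
```

The order service publishes one event and has no idea which of those rules exist. Adding a fourth reaction is a new rule, not a change to the publisher.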

Orchestration vs. Choreography

These two patterns represent different philosophies for coordinating work across services.

Orchestration uses a central coordinator — like AWS Step Functions — that tells each service what to do and when. It’s great for complex workflows where you need visibility into the overall process. I use Step Functions for anything with more than three steps, especially when I need error handling and retries at each stage.
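Here’s a sketch of what such a workflow can look like, written as a Python dict in Amazon States Language. The account ID, region, and function names are placeholders, and the retry numbers are arbitrary. The small checker at the end is my own helper, not part of any AWS tooling.

```python
import json

# Placeholder account ID, region, and function names, for illustration only.
ARN_PREFIX = "arn:aws:lambda:us-east-1:123456789012:function:"


def task(function_name, next_state=None):
    """Build a Task state with retries and a catch-all failure route."""
    state = {
        "Type": "Task",
        "Resource": ARN_PREFIX + function_name,
        "Retry": [{
            "ErrorEquals": ["States.TaskFailed"],
            "IntervalSeconds": 2,
            "MaxAttempts": 3,
            "BackoffRate": 2.0,
        }],
        "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}],
    }
    if next_state is None:
        state["End"] = True
    else:
        state["Next"] = next_state
    return state


definition = {
    "Comment": "Order workflow sketch",
    "StartAt": "ReserveInventory",
    "States": {
        "ReserveInventory": task("reserve-inventory", "ProcessPayment"),
        "ProcessPayment": task("process-payment", "CreateShippingLabel"),
        "CreateShippingLabel": task("create-shipping-label"),
        "NotifyFailure": {
            "Type": "Task",
            "Resource": ARN_PREFIX + "notify-failure",
            "End": True,
        },
    },
}


def dangling_targets(definition):
    """Return transition targets that do not name a defined state."""
    states = definition["States"]
    targets = {definition["StartAt"]}
    for state in states.values():
        if "Next" in state:
            targets.add(state["Next"])
        for catcher in state.get("Catch", []):
            targets.add(catcher["Next"])
    return targets - set(states)


assert not dangling_targets(definition)
print(json.dumps(definition, indent=2))
```

The whole process is readable in one place: the order of steps, what gets retried, and where failures end up. That visibility is what you’re paying the coordinator for.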

Choreography takes the opposite approach. There’s no central controller. Each service knows what to do based on the events it receives. It’s more autonomous and scales well, but it can be harder to debug when something goes wrong because there’s no single place to look at the whole picture.
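Here’s the same idea as a toy in-memory sketch. Each handler knows only which event it reacts to and which event it emits next. The event names and handlers are made up, and the `run` loop stands in for the message bus. It delivers events but knows nothing about the workflow.

```python
from collections import defaultdict, deque

subscribers = defaultdict(list)  # event type -> handlers
log = []


def on(event_type):
    """Decorator that subscribes a handler to one event type."""
    def register(handler):
        subscribers[event_type].append(handler)
        return handler
    return register


@on("OrderPlaced")
def reserve_inventory(event):
    log.append("inventory reserved")
    return [{"type": "InventoryReserved", "order_id": event["order_id"]}]


@on("InventoryReserved")
def process_payment(event):
    log.append("payment processed")
    return [{"type": "PaymentProcessed", "order_id": event["order_id"]}]


@on("PaymentProcessed")
def create_shipping_label(event):
    log.append("shipping label created")
    return []  # end of the chain: nothing further to emit


def run(first_event):
    """Deliver events until none are left; plays the role of the bus."""
    pending = deque([first_event])
    while pending:
        event = pending.popleft()
        for handler in subscribers[event["type"]]:
            pending.extend(handler(event))


run({"type": "OrderPlaced", "order_id": "A-1001"})
print(log)
```

Notice that the overall sequence isn’t written down anywhere. You can only reconstruct it by reading every handler, which is exactly why debugging choreography takes more effort.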

Which Pattern Should You Pick?

- Point-to-Point: Good for simple, two-system integrations. Don’t use it if you’re planning to grow.

- Hub-and-Spoke: Great when you need centralized control but watch out for single points of failure.

- ESB: Powerful for complex enterprises, but keep it focused on routing — not business logic.

- Microservices: Best for teams that want to deploy independently. Be ready for distributed systems challenges.

- SOA: The principles are solid. Use modern tooling and you’ll be fine.

- File Transfer: Perfect for batch processing. S3 plus Lambda is a winning combo.

- Shared Database: Pragmatic for small teams. Plan your escape route.

- RPC: Low latency, strong typing. Always plan for failure.

- MOM: My personal favorite. SQS and SNS make async communication easy.

- Data Streaming: Real-time use cases. Kinesis or MSK depending on your needs.

- API Integration: The default choice for most external integrations.

- Event-Driven: EventBridge is a game changer. Use it for loosely coupled architectures.

- Orchestration: Step Functions for complex, multi-step workflows.

- Choreography: Distributed control that works well with microservices.

Here’s the thing I’ve learned after years of building integrations: there’s no single “best” pattern. The right choice depends on your team, your scale, and your specific use case. Most real-world architectures combine several patterns. You might use API Gateway for external traffic, EventBridge for internal event routing, and SQS for async job processing — all in the same application.

Start simple. Add complexity only when you need it. And whatever you do, document your integration architecture. Future you will thank present you.
