Linux System Logs on AWS: CloudWatch and Log Analysis

Linux system logs have gotten complicated, with all the log formats, rotation policies, and monitoring tools flying around. As someone who's spent years troubleshooting production Linux servers at 2 AM, I've learned a lot about finding the answer buried in log files. Today, I'll share what I've learned with you.

There’s a saying among sysadmins: “The answer is always in the logs.” It’s true about 95% of the time. The other 5%, the logs would have told you the answer if you’d configured logging properly. I can’t tell you how many times I’ve solved a mystery that had everyone stumped just by reading the right log file with the right filter.

Types of Linux System Logs

Linux organizes its logs into several categories, and knowing where to look is half the battle:

- Authentication Logs: Found at /var/log/auth.log (Debian/Ubuntu) or /var/log/secure (RHEL and Amazon Linux 2). Amazon Linux 2023 doesn't install rsyslog by default, so on those instances the same events live in the systemd journal instead. Every login attempt, successful or failed, ends up here. This is the first place I check when investigating potential security incidents. Failed SSH login attempts from random IP addresses? You'll see them here.

- System Logs: Located at /var/log/syslog (Debian/Ubuntu) or /var/log/messages (RHEL/Amazon Linux). This is the catch-all for general system events. Service start/stop messages, cron job output, kernel messages: it all lands here if there's no more specific log file.

- Kernel Logs: /var/log/kern.log (Debian/Ubuntu) has detailed kernel-level messages; RHEL-family systems send them to /var/log/messages, and dmesg or journalctl -k work on any systemd host. Hardware issues, driver problems, and out-of-memory kills show up here. When an EC2 instance starts behaving strangely, the kernel log is one of my first stops.

- Application Logs: These vary by application. Nginx logs live in /var/log/nginx/, Apache in /var/log/httpd/ (RHEL) or /var/log/apache2/ (Debian/Ubuntu). Most applications let you configure log locations and verbosity levels.

- Boot Logs: /var/log/boot.log captures service startup messages from the boot process. When an instance fails to boot properly, this log (or journalctl -b on systemd hosts) usually tells you why.
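To make the authentication-log point concrete, here's a minimal Python sketch that picks whichever auth log file exists on the host and counts failed SSH password attempts per source IP. It assumes the standard OpenSSH "Failed password for ... from <ip> port <n>" wording in a plain-text syslog file; `find_auth_log`, `count_failed_ssh`, and `top_offenders` are hypothetical names I made up for illustration. On Amazon Linux 2023 without rsyslog, you'd feed it lines from the journal instead.

```python
import os
import re
from collections import Counter

# Debian/Ubuntu write auth events to auth.log; RHEL-family and Amazon Linux 2 use secure.
AUTH_LOG_CANDIDATES = ("/var/log/auth.log", "/var/log/secure")

# Matches OpenSSH messages such as:
#   "Failed password for invalid user admin from 203.0.113.5 port 52144 ssh2"
FAILED_SSH = re.compile(
    r"Failed password for (?:invalid user )?(?P<user>\S+) "
    r"from (?P<ip>\S+) port \d+"
)


def find_auth_log(candidates=AUTH_LOG_CANDIDATES):
    """Hypothetical helper: return the first auth log path that exists, or None."""
    for path in candidates:
        if os.path.exists(path):
            return path
    return None


def count_failed_ssh(lines):
    """Hypothetical helper: count failed SSH password attempts per source IP."""
    counts = Counter()
    for line in lines:
        match = FAILED_SSH.search(line)
        if match:
            counts[match.group("ip")] += 1
    return counts


def top_offenders(path, n=10):
    """Read an auth log (usually needs root) and return the n noisiest IPs."""
    with open(path, encoding="utf-8", errors="replace") as f:
        return count_failed_ssh(f).most_common(n)
```

Usage would be something like `top_offenders(find_auth_log())` run with sudo; a handful of IPs with hundreds of failures each is the classic brute-force signature.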

Viewing and Analyzing Logs

Probably should have led with this section, honestly, because all the log knowledge in the world doesn’t help if you can’t efficiently read the files. Here are the commands I use daily:

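tail -f /var/log/syslog is the real-time log viewer. It shows new log entries as they're written, which is invaluable when you're reproducing an issue and want to see what happens live. I keep this running in a terminal whenever I'm debugging. If the file might get rotated while you're watching, use tail -F instead: it reopens the file by name, whereas -f keeps following the original file even after it's been renamed.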

journalctl is the modern way to query logs on systemd-based systems (which includes Amazon Linux 2023). It’s more powerful than reading raw log files because you can filter by service, time range, severity, and more. journalctl -u nginx --since "1 hour ago" shows you just Nginx log entries from the last hour. Game changer for focused troubleshooting.
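If you want to post-process journal entries in a script rather than eyeball them, journalctl can print one JSON object per line with -o json. Here's a minimal Python sketch, assuming the standard journal field names (MESSAGE, PRIORITY, _SYSTEMD_UNIT, and __REALTIME_TIMESTAMP in microseconds); the helper names are hypothetical.

```python
import json
import subprocess
from datetime import datetime, timezone


def journal_command(unit, since="1 hour ago", priority=None):
    """Hypothetical helper: build a journalctl call that emits JSON, one entry per line."""
    cmd = ["journalctl", "-u", unit, "--since", since, "-o", "json", "--no-pager"]
    if priority is not None:
        # e.g. "-p err" keeps entries at err (3) or more severe.
        cmd += ["-p", priority]
    return cmd


def parse_journal_json(lines):
    """Hypothetical helper: turn journalctl -o json output into simple dicts."""
    entries = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        record = json.loads(line)
        message = record.get("MESSAGE")
        if isinstance(message, list):
            if all(isinstance(b, int) for b in message):
                # Non-UTF-8 messages are serialized as an array of byte values.
                message = bytes(message).decode("utf-8", errors="replace")
            else:
                message = str(message)
        micros = int(record["__REALTIME_TIMESTAMP"])
        entries.append({
            "time": datetime.fromtimestamp(micros / 1_000_000, tz=timezone.utc),
            "unit": record.get("_SYSTEMD_UNIT"),
            "priority": int(record["PRIORITY"]) if "PRIORITY" in record else None,
            "message": message,
        })
    return entries


def read_journal(unit, since="1 hour ago", priority=None):
    """Run journalctl and parse its output (needs permission to read the journal)."""
    result = subprocess.run(journal_command(unit, since, priority),
                            capture_output=True, text=True, check=True)
    return parse_journal_json(result.stdout.splitlines())
```

For example, `read_journal("nginx", priority="err")` would return only error-or-worse Nginx entries from the last hour as a list of dicts.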

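grep and awk are your friends for parsing large log files. Need to find all 500 errors in your web server log? grep " 500 " /var/log/nginx/access.log gets you there fast, though it also matches any line whose response size happens to be 500 bytes. For more complex analysis, I pipe log output through awk to extract and aggregate specific fields; with the default combined log format, awk '$9 == 500' checks the status field specifically.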
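Here's the same idea as a small Python sketch, roughly equivalent to awk '$9 == 500 {print $7}' access.log | sort | uniq -c: parse each line of Nginx's default "combined" format and count 500s per request path. The regex assumes that default format (a custom log_format would need a different pattern), and `errors_by_path` is a hypothetical name.

```python
import re
from collections import Counter

# Nginx default "combined" format:
# $remote_addr - $remote_user [$time_local] "$request" $status $body_bytes_sent "$http_referer" "$http_user_agent"
COMBINED = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) (?P<size>\S+)'
)


def errors_by_path(lines, status="500"):
    """Hypothetical helper: count requests with the given status, grouped by path."""
    counts = Counter()
    for line in lines:
        m = COMBINED.match(line)
        # Compare the parsed status field only, so a 500-byte response doesn't count.
        if m and m.group("status") == status:
            counts[m.group("path")] += 1
    return counts
```

Calling `errors_by_path(open("/var/log/nginx/access.log")).most_common(10)` would list the ten paths producing the most 500s.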

Understanding Logrotate

Log files grow continuously, and without management, they'll fill up your disk. Logrotate handles this automatically on most Linux systems. It rotates old log files (renaming them and, if configured, compressing them) and creates fresh ones on a schedule you define, or once a file passes a size limit.

The default configuration in /etc/logrotate.conf usually rotates logs weekly and keeps four weeks of history. I customize this per-application based on how verbose the logging is and how much disk space I have. For high-traffic web servers, I rotate daily and keep 30 days of compressed logs. For quieter services, weekly rotation is fine.
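To show what "rotate" actually means on disk, here's a simplified Python sketch of one rotation cycle in the spirit of logrotate's rotate N plus compress directives: the live file's contents become app.log.1.gz, older archives shift up one number, and anything past the retention count is deleted. It's an illustration of the mechanism only, using a copytruncate-style approach; in production you'd let logrotate do this, since it also handles delaycompress, postrotate scripts, and more. `rotate` is a hypothetical name.

```python
import gzip
import os
import shutil


def rotate(path, keep=4):
    """Hypothetical helper: one rotation cycle, keeping at most `keep` .gz archives."""
    if keep < 1:
        raise ValueError("keep must be at least 1")
    if not os.path.exists(path):
        return  # nothing to rotate
    # Delete the archive that would fall past the retention count.
    oldest = f"{path}.{keep}.gz"
    if os.path.exists(oldest):
        os.remove(oldest)
    # Shift from the highest number down so no archive is overwritten.
    for n in range(keep - 1, 0, -1):
        src = f"{path}.{n}.gz"
        if os.path.exists(src):
            os.replace(src, f"{path}.{n + 1}.gz")
    # Compress the live log into path.1.gz ...
    with open(path, "rb") as src, gzip.open(f"{path}.1.gz", "wb") as dst:
        shutil.copyfileobj(src, dst)
    # ... then empty it in place (copytruncate-style), so a process that still
    # has the file open in append mode keeps writing to the now-empty log.
    with open(path, "r+b") as f:
        f.truncate(0)
```

Run daily with keep=30, this gives the "rotate daily, keep 30 days compressed" policy I described for busy web servers.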

On AWS EC2 instances, I always make sure logrotate is configured properly before going to production. I once had an instance run out of disk space because an application was writing debug-level logs to a file that nobody rotated. The application crashed, and the root cause was embarrassingly simple.
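A cheap pre-production check that would have caught my runaway debug log: walk the log directory, flag any file over a size threshold, and look at how much space is left on that filesystem. A minimal Python sketch; the 500 MB threshold and the function names are arbitrary, hypothetical choices.

```python
import os
import shutil


def oversized_logs(root="/var/log", limit_bytes=500 * 1024 * 1024):
    """Hypothetical helper: (size, path) for files under root above the limit, biggest first."""
    found = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                size = os.path.getsize(path)
            except OSError:
                continue  # vanished (e.g. rotated mid-scan) or unreadable
            if size > limit_bytes:
                found.append((size, path))
    return sorted(found, reverse=True)


def free_fraction(path="/var/log"):
    """Hypothetical helper: fraction of the filesystem holding `path` that is free."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total
```

Any file this reports that isn't covered by a logrotate rule is a disk-full incident waiting to happen.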

Centralized Logging With CloudWatch

Looking at logs on individual instances doesn’t scale. When you’re running 20 EC2 instances behind a load balancer, SSH-ing into each one to grep through logs is painful. That’s where CloudWatch Logs comes in.

The CloudWatch agent runs on your instances and streams log files to CloudWatch in near real-time. You configure which log files to collect, and they appear in log groups in the CloudWatch console. From there, you can search across all instances at once, set up metric filters to trigger alarms, and export logs to S3 for long-term storage.
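For the metric-filter-plus-alarm piece, here's a minimal boto3 sketch: a metric filter that emits 1 each time the phrase "Failed password" appears in a log group, and an alarm on the sum of those matches over five minutes. The log group, namespace, metric name, threshold, and SNS topic ARN are all hypothetical placeholders, and boto3 is imported only inside `apply()` so the request builders can be inspected without AWS credentials.

```python
def metric_filter_request(log_group, pattern, metric_name, namespace):
    """Hypothetical helper: arguments for CloudWatch Logs PutMetricFilter."""
    return {
        "logGroupName": log_group,
        "filterName": f"{metric_name}-filter",
        # A double-quoted term matches that exact phrase in unstructured lines.
        "filterPattern": pattern,
        "metricTransformations": [{
            "metricName": metric_name,
            "metricNamespace": namespace,
            "metricValue": "1",   # emit 1 per matching log event
            "defaultValue": 0.0,  # emit 0 for events that don't match
        }],
    }


def alarm_request(metric_name, namespace, threshold, topic_arn, period=300):
    """Hypothetical helper: arguments for CloudWatch PutMetricAlarm on the per-period sum."""
    return {
        "AlarmName": f"{metric_name}-high",
        "MetricName": metric_name,
        "Namespace": namespace,
        "Statistic": "Sum",
        "Period": period,
        "EvaluationPeriods": 1,
        "Threshold": float(threshold),
        "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        "TreatMissingData": "notBreaching",
        "AlarmActions": [topic_arn],
    }


def apply(log_group, pattern, metric_name, namespace, threshold, topic_arn):
    import boto3  # deferred so the builders above work without AWS access

    boto3.client("logs").put_metric_filter(
        **metric_filter_request(log_group, pattern, metric_name, namespace))
    boto3.client("cloudwatch").put_metric_alarm(
        **alarm_request(metric_name, namespace, threshold, topic_arn))
```

A call might look like `apply("/ec2/var/log/secure", '"Failed password"', "FailedSSHLogins", "Custom/Security", 20, topic_arn)`, with every argument adjusted to your own naming.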

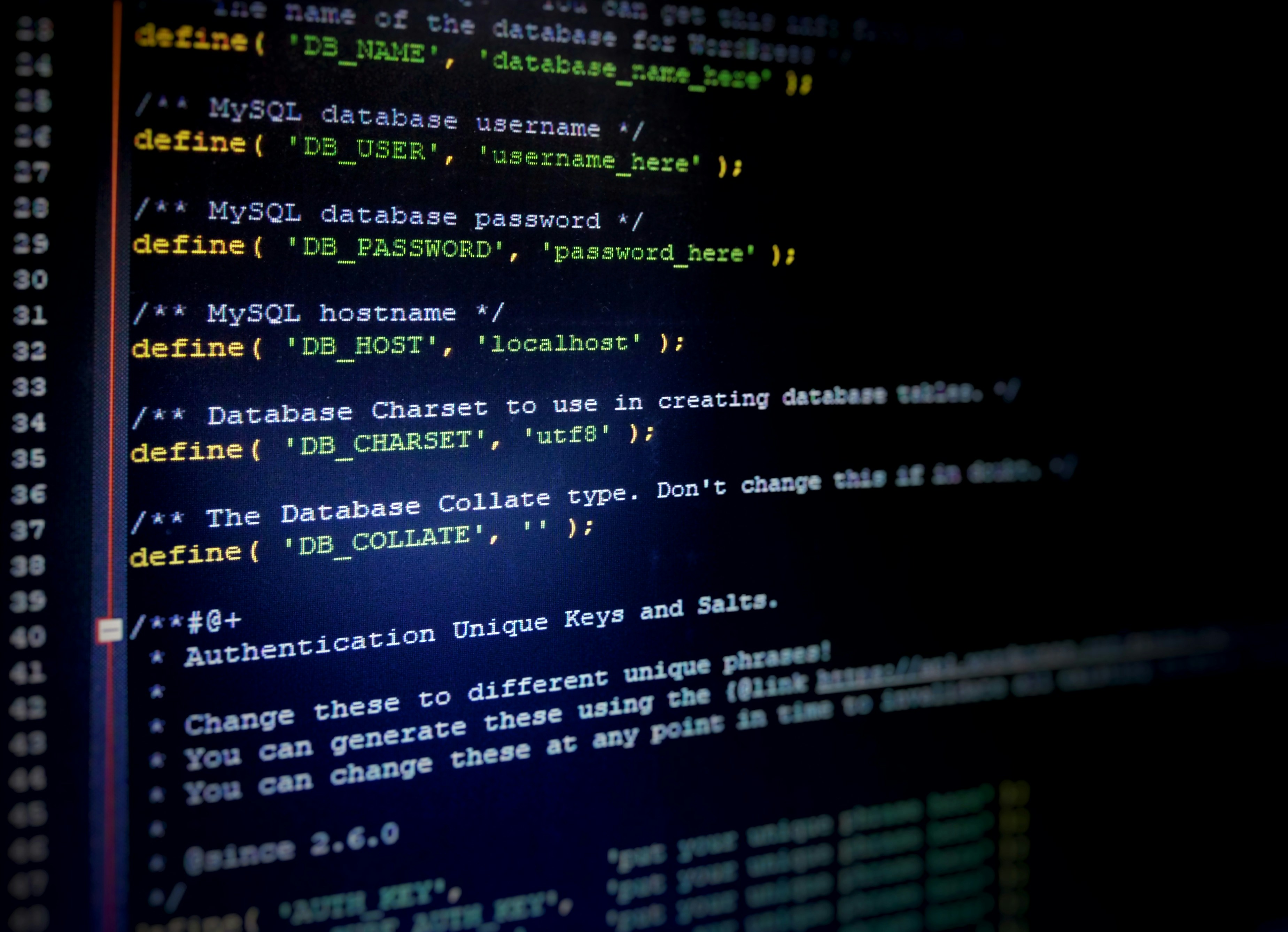

Setting up the CloudWatch agent is straightforward:

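- Install the CloudWatch agent on your instance (the amazon-cloudwatch-agent package is in the Amazon Linux repos)

- Create a configuration file specifying which log files to collect

- Attach an IAM role to your instance that allows CloudWatch Logs access (the AWS-managed CloudWatchAgentServerPolicy covers this)

- Start the agent with amazon-cloudwatch-agent-ctl, pointing it at your configuration file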
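For the configuration step, the agent reads a JSON file. Here's a Python sketch that writes a minimal logs-only config, assuming the agent's documented logs.logs_collected.files.collect_list layout and its {instance_id} stream-name placeholder; the /ec2 log group prefix is my own hypothetical convention. The file typically goes to /opt/aws/amazon-cloudwatch-agent/etc/amazon-cloudwatch-agent.json and is loaded with `sudo /opt/aws/amazon-cloudwatch-agent/bin/amazon-cloudwatch-agent-ctl -a fetch-config -m ec2 -s -c file:<path>`, but check the agent documentation for your version.

```python
import json


def agent_config(files, group_prefix="/ec2"):
    """Hypothetical helper: a logs-only CloudWatch agent config for the given files."""
    collect_list = [
        {
            "file_path": path,
            # e.g. /var/log/secure -> log group /ec2/var/log/secure (hypothetical naming)
            "log_group_name": group_prefix + path,
            # The agent substitutes {instance_id}, giving one stream per instance.
            "log_stream_name": "{instance_id}",
        }
        for path in files
    ]
    return {"logs": {"logs_collected": {"files": {"collect_list": collect_list}}}}


def write_agent_config(files, out_path):
    """Write the config as pretty-printed JSON."""
    with open(out_path, "w", encoding="utf-8") as f:
        json.dump(agent_config(files), f, indent=2)
```

One stream per instance inside one group per file is what lets you search a single file type across the whole fleet at once.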

That’s what makes CloudWatch endearing to us Linux admins on AWS — it takes the pain out of centralized logging without requiring you to run your own log infrastructure.

CloudWatch Logs Insights

CloudWatch Logs Insights gives you a query language for searching and analyzing your logs. It's like SQL for log data, and it's incredibly powerful. Want to find the top 10 most frequent error messages across all your instances in the last hour? That's a one-line query in Logs Insights.

I use Logs Insights for most of my log analysis these days. It’s faster than grep for large volumes, works across multiple log groups, and can produce visualizations directly in the console. The query syntax takes a little getting used to, but once you learn the basics, you’ll wonder how you lived without it.
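Here's a sketch of that "top 10 errors" query, plus a small boto3 wrapper for running it from a script. It assumes your error lines contain the literal string ERROR, and the helper names are hypothetical; the API calls are the CloudWatch Logs StartQuery and GetQueryResults operations, which take epoch seconds for the time range.

```python
import time

# Top 10 most frequent messages containing "ERROR" in the queried window.
TOP_ERRORS_QUERY = """
fields @message
| filter @message like /ERROR/
| stats count(*) as occurrences by @message
| sort occurrences desc
| limit 10
""".strip()


def last_hours_window(hours, now=None):
    """Hypothetical helper: (start, end) in epoch seconds, the unit StartQuery expects."""
    end = int(now if now is not None else time.time())
    return end - hours * 3600, end


def rows_to_dicts(results):
    """GetQueryResults returns each row as a list of {"field", "value"} cells."""
    return [{cell["field"]: cell["value"] for cell in row} for row in results]


def run_insights_query(log_group_names, query=TOP_ERRORS_QUERY, hours=1, poll_seconds=1.0):
    import boto3  # deferred so the helpers above work without AWS access

    logs = boto3.client("logs")
    start, end = last_hours_window(hours)
    query_id = logs.start_query(
        logGroupNames=log_group_names,
        startTime=start,
        endTime=end,
        queryString=query,
    )["queryId"]
    while True:
        response = logs.get_query_results(queryId=query_id)
        status = response["status"]
        if status == "Complete":
            return rows_to_dicts(response["results"])
        if status in ("Failed", "Cancelled", "Timeout", "Unknown"):
            raise RuntimeError(f"Logs Insights query ended with status {status}")
        time.sleep(poll_seconds)  # still Scheduled or Running
```

In the console you'd just paste the query text; the wrapper is for when you want the same answer in a cron job or incident script.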

Syslog and Rsyslog

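Under the hood, most traditional Linux logging goes through syslog (these days usually rsyslog, a more capable successor to the original syslogd). On systemd hosts, journald collects messages first, and rsyslog, where installed, picks them up from there. Rsyslog handles message routing: it decides which messages go to which log files based on facility (where the message came from) and severity (how important it is).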
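To make facility and severity concrete: a syslog message carries a PRI value equal to facility * 8 + severity (RFC 3164 / RFC 5424), and a traditional selector such as authpriv.* or kern.warning matches a facility plus that severity and anything more severe. Here's a simplified Python sketch of the routing decision. It ignores rsyslog extras like =, !, none, and comma lists, and the rule table is a hypothetical example loosely modelled on RHEL-style defaults (which add authpriv.none and cron.none to the messages rule to keep those out).

```python
# Facility codes from RFC 5424 (subset) and the eight severities.
FACILITIES = {"kern": 0, "user": 1, "mail": 2, "daemon": 3, "auth": 4,
              "syslog": 5, "cron": 9, "authpriv": 10, "local0": 16}
SEVERITIES = {"emerg": 0, "alert": 1, "crit": 2, "err": 3, "warning": 4,
              "notice": 5, "info": 6, "debug": 7}


def decode_pri(pri):
    """Split a PRI value into (facility, severity)."""
    return divmod(pri, 8)


def selector_matches(selector, facility, severity):
    """'facility.severity' selector: the severity means 'this level or more severe'."""
    fac_name, sev_name = selector.split(".", 1)
    if fac_name != "*" and FACILITIES[fac_name] != facility:
        return False
    if sev_name == "*":
        return True
    return severity <= SEVERITIES[sev_name]  # lower number = more severe


# Hypothetical rule table; like rsyslog, every matching rule receives the message.
RULES = [
    ("authpriv.*", "/var/log/secure"),
    ("cron.*", "/var/log/cron"),
    ("*.info", "/var/log/messages"),
]


def route(pri, rules=RULES):
    """Return every target file whose selector matches this message."""
    facility, severity = decode_pri(pri)
    return [target for selector, target in rules
            if selector_matches(selector, facility, severity)]
```

For example, PRI 86 decodes to authpriv.info, which lands in both /var/log/secure and /var/log/messages under these simplified rules, while a user.debug message matches nothing and is dropped.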

Understanding severity levels helps you tune your logging:

- emerg (0): System is unusable. You should never see this in normal operation.

- alert (1): Action must be taken immediately.

- crit (2): Critical conditions. Hardware failures, for example.

- err (3): Error conditions. Application errors land here.

- warning (4): Warning conditions. Something might be wrong.

- notice (5): Normal but significant. Service restarts, for instance.

- info (6): Informational messages. Normal operational messages.

- debug (7): Debug-level messages. Extremely verbose. Never leave this on in production.
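Python's standard library exposes this same 0-7 scale as constants on logging.handlers.SysLogHandler, so here's a small sketch of an enum mirroring the list above and a filter that keeps only records at or above a chosen threshold. Remember the ordering is inverted: a lower number is more severe. `Severity` and `at_or_above` are hypothetical names.

```python
from enum import IntEnum
from logging.handlers import SysLogHandler


class Severity(IntEnum):
    """Hypothetical enum mirroring the syslog severity list."""
    EMERG = SysLogHandler.LOG_EMERG      # 0
    ALERT = SysLogHandler.LOG_ALERT      # 1
    CRIT = SysLogHandler.LOG_CRIT        # 2
    ERR = SysLogHandler.LOG_ERR          # 3
    WARNING = SysLogHandler.LOG_WARNING  # 4
    NOTICE = SysLogHandler.LOG_NOTICE    # 5
    INFO = SysLogHandler.LOG_INFO        # 6
    DEBUG = SysLogHandler.LOG_DEBUG      # 7


def at_or_above(records, threshold):
    """Keep (severity, message) records at `threshold` or more severe."""
    return [(sev, msg) for sev, msg in records if sev <= threshold]
```

A production threshold of Severity.INFO drops the debug chatter while keeping everything from info up to emerg.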

Log Security

Logs contain sensitive information — IP addresses, usernames, sometimes even request bodies with personal data. Protecting log files and access to centralized logging systems is important. Here’s what I do:

- Set restrictive file permissions on log files (640 or 600)

- Use IAM policies to control who can access CloudWatch Logs

- Encrypt logs at rest in S3 if they contain sensitive data

- Implement log retention policies — don’t keep logs longer than necessary

- Be careful about logging request/response bodies that might contain PII
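For the first item, here's a minimal Python sketch that lists log files readable or writable by "other" users and tightens a file to 640. Review the list rather than fixing everything blindly: some files, such as /var/log/wtmp on many distributions, are world-readable on purpose. Also set a matching create mode in your logrotate rules, or the next rotation will recreate the file with whatever mode logrotate uses. The function names are hypothetical.

```python
import os
import stat


def loose_log_files(root="/var/log"):
    """Hypothetical helper: regular files under root readable or writable by 'other'."""
    loose = []
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(dirpath, name)
            try:
                mode = os.lstat(path).st_mode
            except OSError:
                continue  # vanished or unreadable
            if stat.S_ISREG(mode) and mode & (stat.S_IROTH | stat.S_IWOTH):
                loose.append((path, stat.S_IMODE(mode)))
    return sorted(loose)


def tighten(path, mode=0o640):
    """Hypothetical helper: owner read/write, group read, nothing for others."""
    os.chmod(path, mode)
```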

Troubleshooting With Logs: My Process

After years of troubleshooting, I’ve developed a systematic approach:

- Define the problem. “The application is slow” is different from “The application returns 500 errors for /api/users.” Be specific.

- Narrow the time range. When did the problem start? Logs from the right time window are 100x more useful than logs from the whole day.

- Start broad, then narrow. Check system logs for obvious issues (disk full, OOM kills), then move to application logs for specifics.

- Correlate across sources. An application error might be caused by a database timeout, which might be caused by a network issue. Cross-referencing logs from multiple sources reveals the chain of events.

- Look for patterns. Is the error happening on all instances or just one? Is it constant or periodic? Patterns point you toward the root cause.
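Narrowing the time range and correlating across sources are easy to script once each source gives you timestamped lines. Here's a minimal Python sketch, assuming every line starts with an ISO 8601 timestamp (formats like syslog's "Oct 10 13:55:36" would need their own parser) and that each source is already in time order, as a single log file normally is. It merges the sources into one timeline limited to the incident window and tags each line with its origin; the source names and helpers are hypothetical.

```python
import heapq
from datetime import datetime


def parse_ts(line):
    """Parse the leading ISO 8601 timestamp (first space-separated token)."""
    return datetime.fromisoformat(line.split(" ", 1)[0])


def _tagged(name, lines):
    """Yield (timestamp, source, line) so heapq.merge can order by time."""
    for line in lines:
        yield parse_ts(line), name, line


def timeline(sources, start, end):
    """Hypothetical helper: merge time-sorted sources, keeping only [start, end]."""
    streams = [_tagged(name, lines) for name, lines in sources.items()]
    for ts, name, line in heapq.merge(*streams):
        if ts > end:
            break  # merged output is sorted, so nothing later can be in range
        if ts >= start:
            yield name, line
```

Seeing a database warning land one second before a burst of application 500s in the merged output is exactly the kind of chain this is meant to surface.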

Advanced Logging: ELK Stack and Beyond

For teams that need more than CloudWatch, the ELK stack (Elasticsearch, Logstash, Kibana) or its AWS equivalent (Amazon OpenSearch Service) provides powerful log analytics. You get full-text search, complex aggregations, and beautiful dashboards. The trade-off is more infrastructure to manage, but for organizations processing millions of log entries per day, the investment pays off.

Whatever logging approach you choose, the principles are the same: collect everything, centralize it, make it searchable, and set up alerts for the patterns that matter. Your future self, the one troubleshooting a production incident, will be grateful you put in the work upfront.
