Why Cold Starts Happen in the First Place

Lambda cold starts have gotten complicated with all the conflicting advice flying around. I’ve watched API response times climb from 50ms to 800ms during off-peak hours — same culprit every time. Lambda spun down my function and needed to spin it back up.

Here’s what’s actually going on under the hood. AWS builds a fresh execution environment from scratch when you invoke a function that hasn’t run recently. Three phases, every time. The runtime boots first — that’s your Node.js interpreter, Python runtime, or Java Virtual Machine doing its thing. Then your handler code loads into memory. Then any initialization code you wrote outside the handler runs. All of it takes time. You pay that latency tax on each cold start, no exceptions.
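To make the second and third phases concrete, here’s a minimal sketch of a Node.js function that can tell its own cold invocations from warm ones. It assumes a CommonJS handler like the ones later in this article. The names initStartedAt and isColdStart are made up for illustration, not part of any Lambda API.

```javascript
// Module scope: this code runs once per execution environment, during init.
// Anything slow here is paid on every cold start and skipped on warm invocations.
const initStartedAt = Date.now();
let isColdStart = true; // hypothetical flag, flipped after the first invocation

const handler = async (event) => {
  // Handler scope: this code runs on every invocation, warm or cold.
  const coldStart = isColdStart;
  isColdStart = false;
  return {
    coldStart,
    environmentAgeMs: Date.now() - initStartedAt,
  };
};

exports.handler = handler;
```

Logging the coldStart flag is a cheap way to cross-check what CloudWatch reports, since only the first invocation in each environment sees it as true.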

Runtime choice and packaging drive most of the variance. Node.js typically adds 30–100ms. Python sits somewhere in the 20–80ms range. Java? I’ve personally watched Java cold starts hit 600ms or higher without SnapStart — the JVM startup problem is brutal for synchronous APIs. Go is fast. Rust is fast. Running Java in 2024 without provisioned concurrency or SnapStart enabled is essentially choosing slowness on purpose.

Deployment package size matters more than most people realize. A 50MB bundle takes longer to extract and prepare than a 5MB bundle — obviously. VPC attachment tacks on another 40–150ms because Lambda has to set up network interfaces. I once inherited a function attached to a VPC for no reason anybody could remember. Removing that attachment cut cold start time by 90ms flat. Worth auditing every function you own.
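That audit can be scripted. The sketch below assumes you’ve already fetched your function configurations and that they’re shaped like the entries in a Lambda ListFunctions response (FunctionName, CodeSize in bytes, VpcConfig.SubnetIds). The 20MB threshold and the sample data are arbitrary placeholders, not recommendations.

```javascript
// Hypothetical threshold; tune it to your own baseline.
const LARGE_PACKAGE_BYTES = 20 * 1024 * 1024;

// Flags functions that are VPC-attached or ship a large deployment package.
function auditFunctions(functions) {
  const findings = [];
  for (const fn of functions) {
    const subnets = (fn.VpcConfig && fn.VpcConfig.SubnetIds) || [];
    if (subnets.length > 0) {
      findings.push({
        name: fn.FunctionName,
        issue: "vpc-attached",
        detail: `${subnets.length} subnet(s)`,
      });
    }
    if (fn.CodeSize > LARGE_PACKAGE_BYTES) {
      findings.push({
        name: fn.FunctionName,
        issue: "large-package",
        detail: `${(fn.CodeSize / 1048576).toFixed(1)} MB`,
      });
    }
  }
  return findings;
}

// Made-up sample data for illustration only.
const sampleFunctions = [
  { FunctionName: "orders-api", CodeSize: 52428800, VpcConfig: { SubnetIds: ["subnet-a", "subnet-b"] } },
  { FunctionName: "thumbnailer", CodeSize: 5242880, VpcConfig: { SubnetIds: [] } },
  { FunctionName: "cron-report", CodeSize: 1000000 },
];

console.log(auditFunctions(sampleFunctions));
```

A finding is only a prompt to ask why. Some functions genuinely need the VPC, and some large packages are unavoidable.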

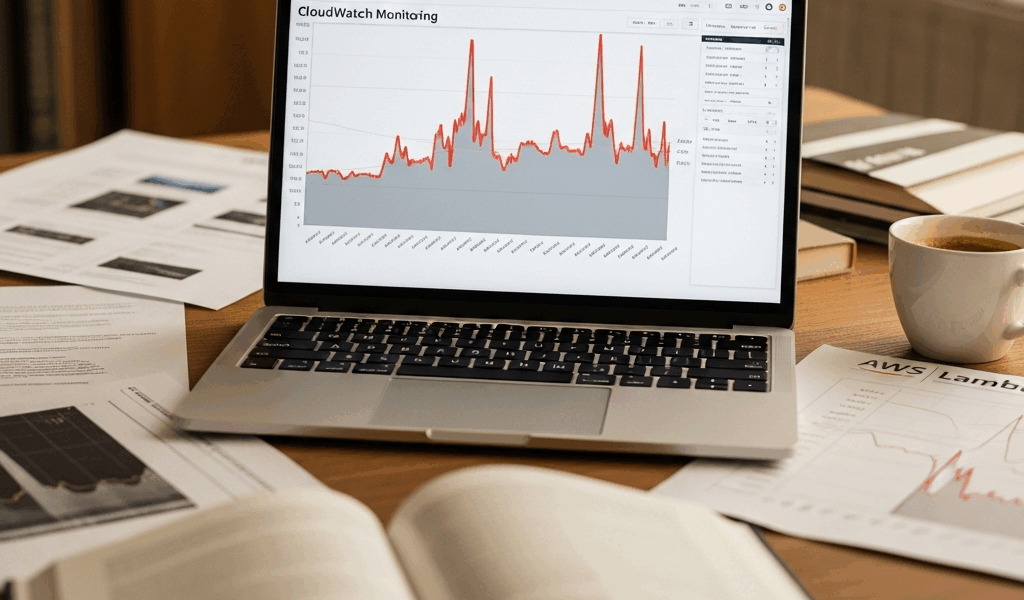

How to Measure Cold Starts in CloudWatch

Measure before you fix anything. Don’t guess.

Open CloudWatch Logs Insights and run this query against your Lambda log group:

fields @duration, @initDuration
| filter @initDuration > 0
| stats pct(@initDuration, 50) as p50, pct(@initDuration, 90) as p90, pct(@initDuration, 99) as p99, max(@initDuration) as max by bin(5m)

That filter targets invocations where @initDuration is present. The field only shows up during actual cold starts, so you’re not polluting your results with warm invocation noise. You’ll see initialization duration at different percentiles across time windows. The p90 is usually what matters for real customer experience. p90 cold start at 400ms against a 200ms SLA means you have a problem that provisioned concurrency might actually solve.

Run it across a full day or a full week. Catch the traffic patterns. A function getting 100 invocations clustered at 9am cold starts far more often than one with steady traffic throughout the day. The query output gives you both the timing and the severity — two things you need before making any changes.
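If you’d rather crunch exported logs yourself, the same numbers can be pulled from the REPORT lines Lambda writes after each invocation. This is a rough sketch: the sample lines are invented, and the nearest-rank percentile used here is a simplification that may not match CloudWatch’s pct() on small samples.

```javascript
// Pulls the init duration (ms) out of a Lambda REPORT log line.
// Returns null for warm invocations, which carry no "Init Duration" field.
function parseInitDuration(line) {
  const match = /Init Duration: ([\d.]+) ms/.exec(line);
  return match ? Number(match[1]) : null;
}

// Nearest-rank percentile over a list of numbers.
function percentile(values, p) {
  if (values.length === 0) return null;
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// Invented sample lines; real request IDs are UUIDs.
const reportLines = [
  "REPORT RequestId: a1\tDuration: 12.50 ms\tBilled Duration: 13 ms\tMemory Size: 128 MB\tMax Memory Used: 60 MB",
  "REPORT RequestId: a2\tDuration: 48.10 ms\tBilled Duration: 49 ms\tMemory Size: 128 MB\tMax Memory Used: 61 MB\tInit Duration: 210.47 ms",
  "REPORT RequestId: a3\tDuration: 51.00 ms\tBilled Duration: 51 ms\tMemory Size: 128 MB\tMax Memory Used: 61 MB\tInit Duration: 180.00 ms",
  "REPORT RequestId: a4\tDuration: 60.20 ms\tBilled Duration: 61 ms\tMemory Size: 128 MB\tMax Memory Used: 62 MB\tInit Duration: 420.10 ms",
  "REPORT RequestId: a5\tDuration: 47.90 ms\tBilled Duration: 48 ms\tMemory Size: 128 MB\tMax Memory Used: 61 MB\tInit Duration: 95.30 ms",
];

// Keep only cold starts, then summarize them.
const initDurations = reportLines.map(parseInitDuration).filter((ms) => ms !== null);

console.log({
  coldStarts: initDurations.length,
  p50: percentile(initDurations, 50),
  p90: percentile(initDurations, 90),
});
```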

Lambda Power Tuning is worth knowing about too. It’s an open-source tool that runs on Step Functions, invokes your function across different memory configurations, and plots execution time against cost. I use it before committing to any fix, especially for new functions where I don’t have baseline intuition yet. Takes about an hour to set up properly. Answers the “should we even worry about this?” question in a way that’s actually rigorous rather than gut-feel.

Fixes Ranked by Impact and Cost

Not all fixes are equal. Some save 50ms and cost nothing. Others save 300ms but add $1,000 to your monthly bill. Here’s the ranked list based on latency reduction and real-world bang for buck.

1. Reduce Deployment Package Size (50–150ms savings, free)

Dead weight is the easiest thing to cut. I once found a complete AWS SDK bundled into a Lambda function that only ever touched the S3 client. The fix took maybe 20 minutes: esbuild to tree-shake unused code, or just import only the specific client you actually need.

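Lambda layers are the cleaner long-term approach to organizing dependencies. Put them in a layer rather than inside your function zip, and updating your function code no longer means re-uploading them on every deployment. Layers don’t make the dependencies themselves any lighter, though. Their contents still count toward the 250MB unzipped limit and still have to be loaded at init, so trim first and layer second.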

2. Remove VPC Attachment Unless You Actually Need It (40–150ms savings, free)

This is my biggest regret across production Lambda code. I attached functions to VPCs because “security” sounded like a good reason, not because the architecture actually required it. A function outside a VPC can call public AWS services such as S3, DynamoDB, and SQS directly, with IAM controlling access. Attach only when the function has to reach something that lives inside the VPC or behind it, such as an RDS instance, an ElastiCache cluster, or an on-premises system over VPN or Direct Connect.

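If VPC attachment is truly unavoidable, use fewer subnets and verify you have enough spare IP addresses. Since 2019 Lambda shares Hyperplane ENIs across execution environments, one per subnet and security group combination, and creates them when the function is configured rather than on each cold start. That makes the penalty far smaller than the multi-second delays of the old per-environment ENI model. Running out of IPs in a subnet is still a constraint you can’t optimize away in code.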

3. Switch Runtime or Enable SnapStart (100–600ms savings, free or low cost)

Node.js or Python for latency-sensitive work. Go for maximum speed if a rewrite is on the table. Stuck on Java? Enable SnapStart. It snapshots the initialized execution environment when you publish a version and restores from that snapshot instead of booting fresh. AWS cites startup improvements of up to 10x. On Java managed runtimes it carries no additional charge, so the only real cost is verifying that your init code behaves correctly after a restore.

4. Provisioned Concurrency (Eliminates cold starts, $20–100+ per month)

Provisioned concurrency pre-warms execution environments. You tell AWS you want 10 warm instances of a function always ready — it keeps them alive. Cold starts hit zero for the first 10 simultaneous invocations. The 11th and beyond still cold start unless you provision more.

The cost math: provisioned concurrency is billed per GB-second of configured memory, whether or not the instance is serving traffic. At 1GB of memory, each instance runs roughly $0.015 per hour in US East. That’s about $11 per month per instance. Ten instances running 24/7: budget a bit over $100 a month. At 128MB the same ten instances cost about an eighth of that. Only justifiable when your SLA can’t absorb 300ms latency spikes, or your traffic is steady enough that provisioned instances actually stay busy.
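Because the charge scales with configured memory, it’s worth running the arithmetic for your own settings. The sketch below covers only the always-on charge and leaves out per-request and duration fees. The per-GB-second price is an assumption (the us-east-1 x86 list price as I understand it), so check the current pricing page before trusting the output.

```javascript
// ASSUMPTION: provisioned concurrency list price, us-east-1, x86.
const PRICE_PER_GB_SECOND = 0.0000041667;
const HOURS_PER_MONTH = 730; // average month

// Monthly cost of keeping `instances` environments warm around the clock.
function provisionedConcurrencyMonthlyCost(instances, memoryMb) {
  const memoryGb = memoryMb / 1024;
  const perInstanceHour = PRICE_PER_GB_SECOND * memoryGb * 3600;
  return perInstanceHour * HOURS_PER_MONTH * instances;
}

for (const memoryMb of [128, 512, 1024]) {
  const cost = provisionedConcurrencyMonthlyCost(10, memoryMb);
  console.log(`10 instances at ${memoryMb}MB: ~$${cost.toFixed(2)}/month`);
}
```

The spread between the smallest and largest memory setting is why the configured size matters as much as the instance count.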

When Provisioned Concurrency Actually Makes Sense

Provisioned concurrency is not free insurance. It’s a deliberate trade with real breakeven math attached.

It makes sense when your function consistently receives at least 50 requests per minute, your SLA demands response times under 200ms, and you’re seeing cold starts violate that SLA more than once a day. Real example from production: an internal API handling 5,000 requests per minute from downstream microservices. Cold starts there cascade through the entire request chain. Provisioned concurrency is clearly justified in that case, and the math works out immediately.

It doesn’t make sense for batch jobs, scheduled tasks where users never notice a 500ms delay, background processing with loose latency tolerance, or APIs that spike hard and then go quiet. A function that sees 1,000 requests at 9am and then silence — provisioned concurrency is burning money for eight hours straight every single day.

Calculate your own breakeven before committing. If each cold start costs $0.10 in customer friction or retry overhead, and you see 100 cold starts per month, that’s $10 in hidden cost. Provisioned concurrency at $100 a month doesn’t pay off there. But at 10,000 cold starts per month, suddenly provisioned concurrency saves both money and sanity simultaneously.
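The breakeven check is a few lines of arithmetic. This sketch plugs in the illustrative figures from the paragraph above. The per-cold-start cost is the hard number to pin down, so treat it as an input you estimate, not something the code can tell you.

```javascript
// Compares the hidden monthly cost of cold starts with the provisioned bill.
function breakEven({ coldStartsPerMonth, costPerColdStart, provisionedMonthlyCost }) {
  const hiddenCost = coldStartsPerMonth * costPerColdStart;
  return {
    hiddenCost,
    // Cold starts per month at which provisioned concurrency starts paying off.
    breakEvenColdStarts: provisionedMonthlyCost / costPerColdStart,
    worthIt: hiddenCost > provisionedMonthlyCost,
  };
}

const quietFunction = breakEven({
  coldStartsPerMonth: 100,
  costPerColdStart: 0.1,
  provisionedMonthlyCost: 100,
});

const busyFunction = breakEven({
  coldStartsPerMonth: 10000,
  costPerColdStart: 0.1,
  provisionedMonthlyCost: 100,
});

console.log({ quietFunction, busyFunction });
```

With these inputs the crossover sits at roughly 1,000 cold starts per month. Below that, the free fixes are the better use of your time.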

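Also worth knowing: provisioned concurrency can scale automatically now. Application Auto Scaling can adjust it on a schedule or by target tracking on utilization, so you aren’t stuck paying for peak capacity around the clock. Don’t confuse it with reserved concurrency, which is free but only reserves and caps how many concurrent executions a function can use and does nothing to prevent cold starts. If your traffic spikes unpredictably, plain on-demand scaling with a small auto-scaled provisioned baseline might beat a large fixed allocation on both cost and flexibility.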

What to Stop Doing That Makes Cold Starts Worse

Probably should have opened with this section, honestly. Prevention moves faster than remediation every time.

Stop Importing Entire SDKs

I’m apparently the kind of person who audits other people’s Lambda functions, and the pattern I see constantly is importing all of boto3 to make exactly one S3 call. Everything imported at module scope loads on cold start. Use lighter alternatives: the AWS SDK for JavaScript v3 ships as modular per-service packages such as @aws-sdk/client-s3. For Python, httpx with raw API calls works if you’re willing to go that route and handle SigV4 request signing yourself. The latency difference is measurable, not theoretical.

Stop Initializing Connections Inside the Handler

Database connections, HTTP clients, Redis clients: all of it belongs outside the handler function. Outside means the connection is created once during init and persists across warm invocations. Inside means every single invocation rebuilds it from zero, warm or cold. Don’t make my mistake.

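// Assumed import: the promise API of the mysql2 package
const mysql = require("mysql2/promise");

// Bad: opens a new connection on every invocation, warm or cold
exports.handler = async (event) => {
  const db = await mysql.createConnection(...);
  // query
};

// Good: start connecting once during init and reuse the connection.
// Stored as a promise because CommonJS has no top-level await.
const dbPromise = mysql.createConnection(...);

exports.handler = async (event) => {
  const db = await dbPromise;
  // query using existing db
};

Stop Using VPC by Default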

This deserves its own section because it’s genuinely endemic across teams. VPC attachment adds latency — that’s not negotiable. Only attach if you’re accessing resources that actually live inside a VPC. Public AWS services do not need it.

Stop Ignoring SnapStart for Java

Java cold starts without SnapStart are painful in a way that’s hard to justify in 2024. SnapStart carries no extra charge on Java managed runtimes, so the latency gains come close to free for almost every Java Lambda running synchronous workloads. Enable it. Run CloudWatch Insights afterward and measure the actual improvement rather than assuming it worked.

The path forward is straightforward: measure first, then fix targeted problems. Run the CloudWatch query. Calculate your breakeven numbers. Pick fixes in priority order. Most teams cut cold start latency by 60–80 percent with zero additional spend — just code changes and some architectural cleanup. If you need zero-cold-start guarantees, provisioned concurrency is the hammer. But measure first. Fix second. Pay third.
