EC2 Instance Types Have Gotten Complicated With All the Options Flying Around

AWS offers roughly 750 different EC2 configurations across 30-plus instance families. That number exists to serve everyone from single-founder startups to Netflix. But most small teams land on AWS without a dedicated ops person, squint at the instance type selector, and pick something that feels reasonable. Three months later? You’re either hemorrhaging money on oversized instances or scrambling because your app crawled to a halt at 2 a.m.

As someone who’s watched teams torch thousands of dollars on the wrong instance type, I’ve spent a lot of time matching workloads to EC2 families. Here’s what I’ve learned.

EC2 instance types aren’t arbitrary. They cluster around three core dimensions — compute power (vCPUs), memory (RAM), and network performance. Every instance family is optimized for a different workload pattern. Your job isn’t memorizing specs. It’s identifying which pattern matches your workload, then picking the smallest instance in that family that actually handles your traffic.

So, without further ado, let’s dive in.

The Four Instance Families Most Teams Actually Use

t3 — Burstable Compute for Intermittent Workloads

So what is t3? It’s an instance family built for workloads that sit idle most of the time but occasionally need to spike.

It works by accumulating CPU credits during quiet periods, then spending them during traffic spikes. Development environments, internal dashboards, marketing sites that only see traffic during business hours — these are the sweet spots.

I’ve seen this one pay off. We ran a client reporting tool on a t3.medium. Most days it sat at 5 percent CPU utilization. Two days a month, the C-suite ran 50 reports simultaneously, and the instance handled it fine on accumulated credits. Cost us about $30/month. An always-on m6i.large would’ve been about $70/month for the same throughput. The t3 won the math, and it wasn’t close.

Real use case: Staging environments, internal tools, low-traffic web apps, scheduled batch jobs that run 30 minutes daily.

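Warning: If your CPU consistently stays above the instance’s baseline (20 percent per vCPU on a t3.small or t3.medium), you will exhaust credits and hit throttling, or, in unlimited mode (the t3 default), pay for surplus credits. t3 is not a cheaper version of m6i for always-on workloads. It’s a completely different tool.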

m6i — The General Purpose Workhorse

This is the safe default. m6i instances pair compute and memory at a 1:4 ratio: one vCPU to four GB of RAM. Web backends, application servers, small databases, anything without a clear CPU-heavy or memory-heavy signature. That predictability is what endears m6i to small-team engineers who just need something that works.

Most small teams should start here and move away only when actual data says otherwise. An m6i.large costs roughly $0.096/hour — about $70/month on-demand in us-east-1. It handles a Flask app with 50 concurrent users without flinching. It handles a WordPress site with 100K monthly visitors. No drama.
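Every monthly figure in this piece is the same arithmetic: hourly rate times roughly 730 hours, the length of an average month. A minimal sketch, assuming Linux on-demand pricing in us-east-1 at the $0.096/hour quoted above; prices change, so check the AWS pricing page before budgeting off them. The function name is just for illustration.

```python
HOURS_PER_MONTH = 730  # 8,760 hours in a year / 12 months


def monthly_cost(hourly_usd: float, instances: int = 1) -> float:
    """Estimate the monthly on-demand bill for always-on instances."""
    return round(hourly_usd * HOURS_PER_MONTH * instances, 2)


# m6i.large at $0.096/hour (us-east-1, Linux, on-demand)
print(monthly_cost(0.096))     # 70.08
print(monthly_cost(0.096, 3))  # 210.24 for a three-node fleet
```

Savings Plans and Reserved Instances lower the hourly rate, but the multiplication stays the same.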

Real use case: Production web apps, microservices, API backends, most SaaS platforms under 10K users.

Warning: m6i is “good enough” for most things but optimal for nothing. Profile your app. If it’s CPU-bound while memory idles, you’re paying for RAM you’ll never use.

c6g — CPU-Intensive Work on Graviton Chips

c6g instances run AWS’s custom Graviton processor. Video encoding, data processing, scientific simulations, API servers fielding thousands of requests per second: these are the workloads c6g was built for. The vCPU-to-RAM ratio is 1:2, half the memory of m6i. You’re paying for compute, not memory.

The real win is cost. c6g instances run 15–20 percent cheaper than their x86 equivalents, and because each Graviton vCPU is a full physical core rather than a hyperthread, they often match or beat them on throughput. A c6g.large runs $0.068/hour. Same workload on a c5.large? $0.085/hour. On m6i.large? $0.096/hour. The gap widens in absolute dollars at larger sizes, and the savings compound fast.

Real use case: High-throughput APIs, batch processing, real-time analytics pipelines, anything CPU-bound that can tolerate ARM architecture.

Warning: Not all software runs on ARM. Older libraries and some proprietary binaries ship x86-only builds, and c6g will fail if your app depends on them. Test before committing.

r6i — Memory-Heavy Workloads

The r family is for anything that lives in RAM: Redis, Elasticsearch, PostgreSQL, in-memory caches, real-time analytics engines. The vCPU-to-RAM ratio is 1:8. You’re buying memory, full stop.

An r6i.xlarge gives you 4 vCPUs and 32 GB RAM for about $0.252/hour — roughly $184/month. Running a Redis cluster for session state or a small PostgreSQL instance? This is your category. Don’t try to save $30/month by forcing it into m6i. You’ll spend that $30 fixing cache misses and slow queries, and then some.

Real use case: Databases, cache layers, in-memory data stores, anything where the dataset is measured in tens of GB.

Warning: r6i is expensive. A lot of teams buy r6i when they just have a bad query plan. Make absolutely sure you actually need the memory before committing.
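The ratios in these sections give you a quick first cut: divide the memory your workload needs by the vCPUs it needs, then take the leanest family whose ratio covers it. This is a back-of-the-envelope heuristic sketched for illustration, not an AWS sizing tool. The function name is hypothetical, and it doesn’t replace the profiling the warnings above call for. If `c` comes back, the ARM caveat for c6g still applies.

```python
# GiB of RAM per vCPU for each family, as described above:
# c6g is 1:2, m6i is 1:4, r6i is 1:8. Ordered leanest first.
FAMILY_RATIOS = [("c", 2), ("m", 4), ("r", 8)]


def family_for(vcpus_needed: float, gib_needed: float) -> str:
    """Pick the leanest family whose GiB-per-vCPU ratio covers the workload."""
    if vcpus_needed <= 0:
        raise ValueError("vcpus_needed must be positive")
    gib_per_vcpu = gib_needed / vcpus_needed
    for family, ratio in FAMILY_RATIOS:
        if gib_per_vcpu <= ratio:
            return family
    # More than 8 GiB per vCPU: still r, but size it by memory and
    # accept more vCPUs than you strictly need.
    return "r"


print(family_for(4, 8))   # c  (2 GiB per vCPU)
print(family_for(2, 6))   # m  (3 GiB per vCPU)
print(family_for(4, 28))  # r  (7 GiB per vCPU)
```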

How to Match Your Workload to an Instance Type

Probably should have opened with this section, honestly.

Start by asking three questions in order:

- Is your app mostly idle with occasional traffic spikes? Yes → t3. No → next question.

- Is your workload CPU-bound or memory-bound? CPU-bound (encoding, heavy compute, high request-per-second processing) → c6g. Memory-bound (database, cache, large datasets in RAM) → r6i. Neither obvious → next question.

- Does cost matter more than simplicity? Yes, and your team can test Graviton → m6g, the Graviton counterpart to m6i. No, or you need x86 compatibility → m6i.
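Written as code, the three questions are a short chain of early returns. A minimal sketch: the function, enum, and parameter names are made up for illustration, and the result is a starting family, not a size. For a balanced workload the Graviton pick is m6g, because c6g’s 1:2 ratio is aimed at CPU-bound work.

```python
from enum import Enum


class Bottleneck(Enum):
    CPU = "cpu"
    MEMORY = "memory"
    NEITHER = "neither"


def pick_family(mostly_idle: bool, bottleneck: Bottleneck,
                cost_over_simplicity: bool, can_test_graviton: bool) -> str:
    """Walk the three questions in order and return a starting family."""
    # Question 1: mostly idle with occasional spikes?
    if mostly_idle:
        return "t3"
    # Question 2: a clear CPU or memory bottleneck?
    if bottleneck is Bottleneck.CPU:
        return "c6g"
    if bottleneck is Bottleneck.MEMORY:
        return "r6i"
    # Question 3: balanced workload. Go Graviton only if cost wins
    # and the team can actually test on ARM.
    if cost_over_simplicity and can_test_graviton:
        return "m6g"
    return "m6i"


print(pick_family(False, Bottleneck.NEITHER,
                  cost_over_simplicity=True, can_test_graviton=False))  # m6i
```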

That’s the framework. Apply it to your actual traffic patterns — at least if you want to stop guessing.

Here’s a quick reference grid for the most common scenarios:

| Workload Pattern | Best Starting Instance | Estimated Monthly Cost (us-east-1, on-demand) |
|---|---|---|
| Development environment, low-traffic web app | t3.small or t3.medium | $15–$30 |
| Production web app, API backend, 50–500 concurrent users | m6i.large | $70 |
| High-traffic API, CPU-intensive work | c6g.large or c6g.xlarge | $50–$99 |
| Single-server database or cache | r6i.large or r6i.xlarge | $92–$184 |

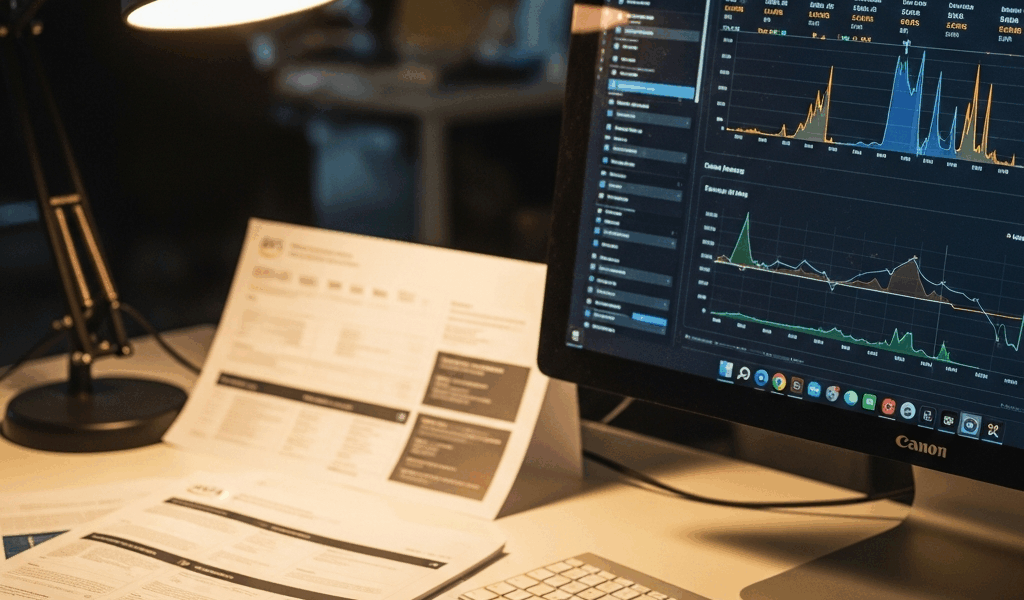

Start with the size that matches your workload type. Then use CloudWatch metrics to validate, keeping in mind that EC2 reports CPU out of the box but memory only once you install the CloudWatch agent. CPU stays under 30 percent and memory under 50 percent after a week? Downsize. Either metric hits 80 percent? Upsize. Simple as that.
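The validation rule is simple enough to script against exported metrics. A minimal sketch, assuming you’ve already pulled a week of CPU and memory samples as percentages. Reading “stays under” as the weekly peak is our interpretation, and the function name is hypothetical.

```python
def rightsizing_action(cpu_pct: list[float], mem_pct: list[float]) -> str:
    """Apply the one-week rule to CPU and memory utilization samples (percent)."""
    if not cpu_pct or not mem_pct:
        raise ValueError("need at least one sample of each metric")
    peak_cpu, peak_mem = max(cpu_pct), max(mem_pct)
    # Either metric reaching 80 percent means the instance is too small.
    if peak_cpu >= 80 or peak_mem >= 80:
        return "upsize"
    # Both staying under their thresholds all week means it is too big.
    if peak_cpu < 30 and peak_mem < 50:
        return "downsize"
    return "keep"


print(rightsizing_action([12, 25, 18], [35, 41, 44]))  # downsize
print(rightsizing_action([40, 86, 55], [50, 52, 49]))  # upsize
```

Checking “upsize” first matters: an instance that peaks at 85 percent memory but idles on CPU should grow, not shrink.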

t3 Burstable Instances — When They Bite You

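t3 instances accrue CPU credits at a predictable rate. A t3.small and a t3.medium each earn 24 credits/hour. Each credit buys you one vCPU running at full performance for one minute. In standard credit mode, when credits hit zero your instance throttles to its baseline CPU rate: 20 percent per vCPU on a t3.small or t3.medium, and anywhere from 5 percent (t3.nano) to 40 percent (t3.xlarge and t3.2xlarge) across the family. That’s it. That’s the trap. In unlimited mode, which is the default for t3, the instance keeps bursting past zero and AWS bills the surplus credits instead, so check which mode your instances actually use.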
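To see how fast a steady load drains a t3, here’s a rough hour-by-hour model of standard credit mode. It’s a sketch, not AWS’s accounting: it assumes AWS’s published t3.medium figures (2 vCPUs, 24 credits earned per hour, a cap of 24 hours of earnings, one credit equal to one vCPU at 100 percent for one minute) and averages load per hour, whereas AWS accrues and spends credits continuously. The class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BurstableSpec:
    vcpus: int
    credits_per_hour: float  # one credit = one vCPU at 100% for one minute

    @property
    def max_balance(self) -> float:
        # Earned credits cap at 24 hours' worth of accrual.
        return self.credits_per_hour * 24

    @property
    def baseline(self) -> float:
        # Per-vCPU utilization you can sustain without draining credits.
        return self.credits_per_hour / (self.vcpus * 60)


T3_MEDIUM = BurstableSpec(vcpus=2, credits_per_hour=24)


def credits_spent_per_hour(spec: BurstableSpec, util: float) -> float:
    """Credits burned in one hour at an average per-vCPU utilization (0.0-1.0)."""
    return spec.vcpus * util * 60


def hours_until_empty(spec: BurstableSpec, util: float,
                      start: Optional[float] = None) -> Optional[float]:
    """Hours of sustained load before the balance hits zero; None if it never does."""
    balance = spec.max_balance if start is None else start
    net = spec.credits_per_hour - credits_spent_per_hour(spec, util)
    if net >= 0:
        return None
    return balance / -net


def simulate_balance(spec: BurstableSpec, hourly_util: list[float],
                     start: Optional[float] = None) -> list[float]:
    """End-of-hour credit balances in standard mode."""
    balance = spec.max_balance if start is None else start
    history = []
    for util in hourly_util:
        balance += spec.credits_per_hour - credits_spent_per_hour(spec, util)
        # Clamp: the balance can't exceed the cap, and at zero the instance is
        # throttled to baseline, so it can't spend more than it earns.
        balance = max(0.0, min(balance, spec.max_balance))
        history.append(balance)
    return history


print(T3_MEDIUM.baseline)                 # 0.2
print(hours_until_empty(T3_MEDIUM, 0.5))  # 16.0
```

At a steady 50 percent, a t3.medium that starts with a full balance is out of credits in 16 hours.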

The throttling is invisible until it suddenly isn’t. Your app slows down. Database queries time out. Your monitoring shows nothing alarming, because CloudWatch doesn’t flag CPU credit exhaustion as an error. It’s a resource constraint, not a failure. The dashboard looks fine. Your users disagree.

The symptom: response times double or triple during peak hours, then normalize. You check CloudWatch CPU and it shows only 20 percent. Confusing, right? That’s credit exhaustion: on a t3.medium, 20 percent is exactly the throttled baseline, so the instance isn’t idle, it’s capped. I’m apparently wired to check CPU percentage first and CloudWatch CPU Credit Balance never, and that mental habit cost a client a very unpleasant Saturday morning. Don’t make my mistake.

Check for it by navigating to your EC2 instance in the AWS console, opening the Monitoring tab, and looking for the “CPU Credit Balance” metric. Trending toward zero? You’re about to hit a wall. Set a CloudWatch alarm to notify you when credits fall below 100 — at least if you’re running anything production-adjacent.
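If you’d rather script the alarm than click through the console, here’s a sketch built on boto3’s CloudWatch `put_metric_alarm`. The 100-credit threshold is the one suggested above. The alarm name, the SNS topic used for notification, the two-period evaluation, and the helper names are our assumptions.

```python
def credit_balance_alarm(instance_id: str, sns_topic_arn: str,
                         threshold: float = 100.0) -> dict:
    """Keyword arguments for CloudWatch PutMetricAlarm on CPUCreditBalance."""
    return {
        "AlarmName": f"{instance_id}-low-cpu-credits",
        "Namespace": "AWS/EC2",
        "MetricName": "CPUCreditBalance",
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Statistic": "Minimum",
        "Period": 300,           # credit metrics are published every 5 minutes
        "EvaluationPeriods": 2,  # two consecutive low readings (10 minutes)
        "Threshold": threshold,
        "ComparisonOperator": "LessThanThreshold",
        "AlarmActions": [sns_topic_arn],  # an SNS topic that notifies you
    }


def create_alarm(instance_id: str, sns_topic_arn: str) -> None:
    """Create the alarm (needs AWS credentials with cloudwatch:PutMetricAlarm)."""
    import boto3  # imported here so the builder above has no AWS dependency

    boto3.client("cloudwatch").put_metric_alarm(
        **credit_balance_alarm(instance_id, sns_topic_arn)
    )
```

Call it as `create_alarm("i-0123456789abcdef0", "arn:aws:sns:us-east-1:123456789012:ops-alerts")`, substituting your own instance ID and topic ARN. Both values here are placeholders.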

The fix is simple. Move to m6i. No credits to run out of, predictable performance, and you can size down if the workload is lighter than you thought. t3 is for workloads you’ve already profiled and confirmed are genuinely bursty, not for production systems running on assumption.

Graviton Instances — Should Your Team Switch

Graviton is AWS’s custom ARM processor, available across the c6g, m6g, and r6g families. The pitch: 15–20 percent cheaper, better performance per dollar, more efficient power consumption. That’s the brochure version.

The actual situation is more nuanced. Graviton is genuinely great — but it comes with compatibility caveats that matter more for some teams than others.

Python, Node.js, Go, Rust, and Java with no native dependencies: Graviton works fine. Prebuilt x86-only binaries, old C libraries with no ARM builds, proprietary software: you’ll hit friction fast. We tested m6g for a client’s Go API last year and it worked flawlessly. Twenty percent cost savings for zero migration effort. Same client has a legacy .NET Framework app that won’t touch ARM with a ten-foot pole. That’s the reality.
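For a Python service, a quick pre-flight before you spin up a test instance is to list which installed packages ship compiled extension modules, since those are the ones that need an ARM (aarch64) build. Here’s a rough sketch using the standard library’s `importlib.metadata`. It only sees Python packages in the current environment, not system libraries or other binaries your app shells out to, and the function name is hypothetical.

```python
import platform
from importlib.metadata import distributions

# Compiled extension suffixes on Linux/macOS and Windows.
NATIVE_SUFFIXES = {".so", ".pyd", ".dylib"}


def native_extension_packages() -> list[str]:
    """Names of installed distributions that ship compiled extension files."""
    found = set()
    for dist in distributions():
        files = dist.files or []  # None when the installer recorded no file list
        if any(f.suffix in NATIVE_SUFFIXES for f in files):
            found.add(dist.metadata.get("Name") or "<unknown>")
    return sorted(found)


if __name__ == "__main__":
    # 'aarch64' on Graviton Linux, 'x86_64' on Intel/AMD.
    print(f"Running on {platform.machine()}")
    for name in native_extension_packages():
        print(f"confirm an aarch64 build exists for: {name}")
```

Most popular packages publish aarch64 wheels, so the list is usually a checklist to confirm, not a list of blockers.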

While you won’t need a full migration plan, you will need a handful of hours to actually test it. Spin up a c6g.large, deploy your app, run load tests for a few hours, then decide. Half a day. Costs less than $5. Most teams find it works. Some find a blocker dependency. Better to know now than after you’ve migrated your production environment.

Honest take: Starting a new project with no hard dependencies on x86? Default to Graviton. The cost savings compound at scale. Already running on m6i and everything works? Don’t migrate for a 20 percent savings unless you’re at the scale where it actually moves the needle on the monthly bill.
