When These Two Even Overlap

The AWS Step Functions vs SQS debate generates a lot of conflicting takes in engineering Slack channels. I’ve watched teams pick the wrong one, spend three sprints regretting it, and then rebuild from scratch, so this piece lays out why the decision goes sideways and how to get it right.

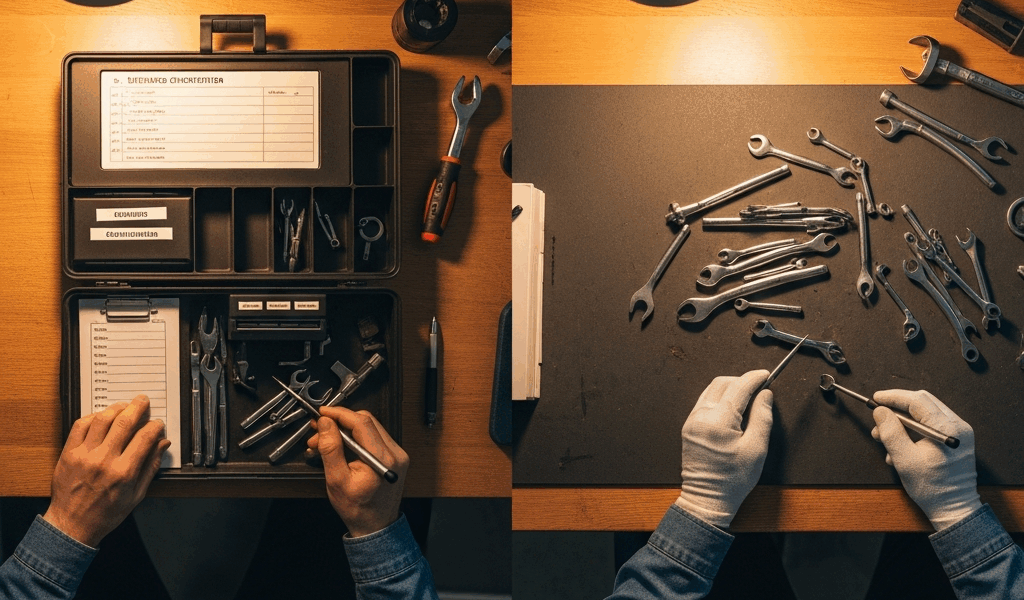

But what is the actual overlap here? It’s async task processing: retries, fan-out jobs hitting multiple services, multi-step background work running outside the request cycle. SQS is a message queue. Step Functions is a workflow orchestrator. Genuinely different things. The confusion lives in the specific zone where both tools look like they could work, and that’s exactly where teams get stuck.

The decision isn’t academic. Pick wrong and you’re either hemorrhaging money — $0.025 per 1,000 state transitions on a job running 50,000 times a day adds up fast — or you’re debugging a failed five-step order workflow by digging through CloudWatch logs at 11pm. Neither is fun. This piece is about getting that choice right before you build.

How SQS Handles Task Queues in Practice

SQS works well when your task has one job to do. A message lands in the queue, a Lambda picks it up, does the work, message disappears. That’s the happy path. The Lambda + SQS trigger pattern is mature, well-documented, and genuinely easy to reason about — at least if you’re dealing with single-step work.
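
As a rough sketch of that happy path, here is what a Lambda handler behind an SQS trigger might look like. The `resize_image` function is a hypothetical stand-in for the real work, and the return shape assumes the event source mapping has `ReportBatchItemFailures` enabled, so only failed messages go back to the queue.

```python
import json


def resize_image(job: dict) -> None:
    """Hypothetical stand-in for the real single-step work."""
    if "key" not in job:
        raise ValueError("job is missing the S3 object key")


def handler(event: dict, context: object) -> dict:
    """Process each SQS record and report only the failed ones back to Lambda."""
    failures = []
    for record in event.get("Records", []):
        try:
            resize_image(json.loads(record["body"]))
        except Exception:
            # Listing the messageId here asks Lambda to leave this message on
            # the queue, so SQS redelivers it after the visibility timeout.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Messages that are not listed in `batchItemFailures` are treated as processed and deleted from the queue.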

Retry behavior is built in but blunt. When the Lambda fails, the message becomes visible again once the visibility timeout expires, and SQS redelivers it. The default timeout is 30 seconds, so set it explicitly; for Lambda triggers, AWS recommends at least six times the function timeout. Configure a dead-letter queue to catch messages that exceed the maxReceiveCount threshold. I’ve seen teams forget the DLQ entirely and then wonder why messages silently vanished once the queue’s retention period ran out. Don’t make the same mistake.
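
A minimal sketch of those two settings, built as the attribute map SQS expects. The helper name, the example ARN, and the default of five receives are assumptions for illustration; the six-times multiplier follows the AWS guidance for Lambda triggers.

```python
import json


def queue_attributes(dlq_arn: str, function_timeout_s: int,
                     max_receive_count: int = 5) -> dict:
    """Build SQS queue attributes with an explicit timeout and a DLQ."""
    return {
        # SQS attribute values are passed as strings.
        "VisibilityTimeout": str(function_timeout_s * 6),
        # After max_receive_count failed receives, SQS moves the message
        # to the dead-letter queue instead of redelivering it again.
        "RedrivePolicy": json.dumps({
            "deadLetterTargetArn": dlq_arn,
            "maxReceiveCount": max_receive_count,
        }),
    }


# Hypothetical ARN, for illustration only.
attrs = queue_attributes("arn:aws:sqs:us-east-1:123456789012:resize-dlq", 30)
```

The resulting dict can be passed as `Attributes` to boto3’s `create_queue` or `set_queue_attributes`.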

Here’s where SQS gets honest about its limits. No built-in state between steps. If your job needs Step A, then Step B, then Step C, you’re coordinating that yourself — chained queues, Lambda calling Lambda, some other pattern you’ll invent and eventually regret. No visual debugging either. When a job fails midway through a three-step process, you’re piecing it together from scattered log entries. Progress tracking on multi-step jobs is a fully custom-built problem.

For high-volume, single-step work though? SQS is clearly right. Image resizing is the canonical example. User uploads a photo, message goes to SQS, Lambda resizes it, done. Maybe 500,000 of these a day. At SQS pricing of $0.40 per million requests, that’s roughly $6 a month if you count one request per message. Send, receive, and delete are each billed as requests, so budget closer to $18 without batching. Fast, cheap, zero orchestration overhead. That’s what makes SQS endearing to us infrastructure-minded engineers.
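
The arithmetic is easy to check. This sketch uses the $0.40 per million figure quoted above and ignores the free tier; the `requests_per_message` parameter is an assumption that lets you account for send, receive, and delete each being billed as a request.

```python
SQS_PRICE_PER_MILLION_REQUESTS = 0.40  # figure quoted in this article


def sqs_monthly_cost(messages_per_day: int, requests_per_message: int = 1,
                     days: int = 30) -> float:
    """Estimate monthly SQS request cost in dollars, ignoring the free tier."""
    requests = messages_per_day * requests_per_message * days
    return requests / 1_000_000 * SQS_PRICE_PER_MILLION_REQUESTS


one_request = sqs_monthly_cost(500_000)        # about 6 dollars
three_requests = sqs_monthly_cost(500_000, 3)  # about 18 dollars
```

Either way, the bill stays in coffee-money territory at this volume.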

How Step Functions Handles the Same Problem

Step Functions asks you to define your workflow as a state machine. Forget the AWS definition for a second — what it actually feels like is drawing a flowchart that AWS executes on your behalf. Each box is a state. Each arrow is a transition. You define success paths, failure paths, and retry limits before anything ever runs.

The retry and catch behavior is genuinely first-class. Set MaxAttempts, set IntervalSeconds between retries, set a BackoffRate so you’re not hammering a struggling downstream service. Task fails beyond retries? You catch it and route it somewhere specific — not into a void. This isn’t bolted on as an afterthought. It’s structural.
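
Here is roughly what that looks like on a single Task state, written as a Python dict that serializes to Amazon States Language. The Lambda ARN and the state names `NotifyFailure` and `TriggerFulfillment` are hypothetical; the `Retry` and `Catch` fields are standard ASL.

```python
import json

charge_payment_state = {
    "Type": "Task",
    # Hypothetical Lambda ARN, for illustration only.
    "Resource": "arn:aws:lambda:us-east-1:123456789012:function:charge-payment",
    "Retry": [{
        "ErrorEquals": ["States.TaskFailed"],
        "IntervalSeconds": 2,   # wait before the first retry
        "MaxAttempts": 3,       # number of retries after the first failure
        "BackoffRate": 2.0,     # multiply the wait on each further retry
    }],
    # Once retries are exhausted, route the error to a specific state.
    "Catch": [{
        "ErrorEquals": ["States.ALL"],
        "ResultPath": "$.error",
        "Next": "NotifyFailure",
    }],
    "Next": "TriggerFulfillment",
}


def retry_delays(rule: dict) -> list:
    """Seconds waited before each retry under a single Retry rule."""
    return [rule["IntervalSeconds"] * rule["BackoffRate"] ** n
            for n in range(rule["MaxAttempts"])]


delays = retry_delays(charge_payment_state["Retry"][0])
asl_fragment = json.dumps(charge_payment_state, indent=2)
```

With these values the retries wait 2, 4, and 8 seconds before the `Catch` block takes over.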

Execution history in the console is where Step Functions earns real points. Every execution shows exactly which state succeeded, which failed, what the input was, what the output was, when each transition happened. ML pipeline failing at the data validation step? You know in 30 seconds instead of 30 minutes. Honestly, that alone has saved teams I know from genuinely bad incidents.

Parallel branching is native. Fan out to three independent services and wait for all of them to finish before moving on: that’s a Parallel state, maybe 15 lines of JSON. (A Map state covers the related case of running the same steps over every item in a list.) Compare that to building the same coordination logic manually with SQS.
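
A minimal sketch of that fan-out as a Parallel state, again built as a Python dict that serializes to ASL. The branch names and ARNs are hypothetical; each branch is its own small state machine with a `StartAt` and `States`.

```python
import json


def lambda_branch(name: str, function_arn: str) -> dict:
    """One branch of a Parallel state: a single Task that ends the branch."""
    return {
        "StartAt": name,
        "States": {name: {"Type": "Task", "Resource": function_arn, "End": True}},
    }


def parallel_state(branches: dict, next_state: str) -> dict:
    """Run every branch concurrently and continue once all have finished."""
    return {
        "Type": "Parallel",
        "Branches": [lambda_branch(n, arn) for n, arn in branches.items()],
        "Next": next_state,
    }


# Hypothetical services and ARNs, for illustration only.
arn_prefix = "arn:aws:lambda:us-east-1:123456789012:function"
fan_out = parallel_state({
    "UpdateInventory": f"{arn_prefix}:update-inventory",
    "NotifyWarehouse": f"{arn_prefix}:notify-warehouse",
    "RecordAnalytics": f"{arn_prefix}:record-analytics",
}, next_state="Aggregate")
fan_out_json = json.dumps(fan_out, indent=2)
```

The state after the Parallel state receives an array with one output per branch, in branch order.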

Now for the honest part. Step Functions Standard Workflows run $0.025 per 1,000 state transitions. Sounds cheap. At scale it isn’t. A workflow with 10 states running 100,000 times per day hits 1,000,000 transitions: $25 a day, $750 a month. Express Workflows are billed by number of requests and execution duration instead, which helps for high-volume short jobs, but executions are capped at five minutes and you have to enable CloudWatch Logs to inspect their execution history. Worth reading the fine print there.
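
To run the same numbers against your own workflow, here is a small estimator. It assumes one billed transition per state per execution, which matches the example above, and it ignores retries and the free tier.

```python
PRICE_PER_1000_TRANSITIONS = 0.025  # Standard Workflows figure quoted above


def standard_workflow_cost(states_per_execution: int, executions_per_day: int,
                           days: int = 30) -> float:
    """Estimate Standard Workflow transition cost in dollars."""
    transitions = states_per_execution * executions_per_day * days
    return transitions / 1000 * PRICE_PER_1000_TRANSITIONS


daily = standard_workflow_cost(10, 100_000, days=1)   # about 25 dollars
monthly = standard_workflow_cost(10, 100_000)         # about 750 dollars
```

Retries add transitions, so treat the result as a floor rather than a forecast.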

The use case where Step Functions is obviously right: multi-step order processing. Place order → validate inventory → charge payment → trigger fulfillment → send confirmation. Five states, each with retry logic, everything visible in the console. Building that same thing with chained SQS queues is a multi-week project. Building it with Step Functions is an afternoon.
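
As a sketch of how small that definition can be, this helper chains the five steps into a linear state machine with the same retry rule on each. The state names, the ARN prefix, and the retry values are assumptions for illustration, not a production definition.

```python
import json

ORDER_STEPS = ["PlaceOrder", "ValidateInventory", "ChargePayment",
               "TriggerFulfillment", "SendConfirmation"]


def linear_workflow(steps: list, arn_prefix: str) -> dict:
    """Chain Task states in order, each with a basic retry rule."""
    states = {}
    for i, step in enumerate(steps):
        state = {
            "Type": "Task",
            "Resource": f"{arn_prefix}:{step}",
            "Retry": [{"ErrorEquals": ["States.TaskFailed"],
                       "IntervalSeconds": 2, "MaxAttempts": 3,
                       "BackoffRate": 2.0}],
        }
        if i + 1 < len(steps):
            state["Next"] = steps[i + 1]  # hand off to the following step
        else:
            state["End"] = True           # last step finishes the execution
        states[step] = state
    return {"StartAt": steps[0], "States": states}


# Hypothetical ARN prefix, for illustration only.
order_workflow = linear_workflow(
    ORDER_STEPS, "arn:aws:lambda:us-east-1:123456789012:function")
order_workflow_json = json.dumps(order_workflow, indent=2)
```

In practice you would tune the retry rule per step; retrying a payment charge, for example, only makes sense if the charge call is idempotent.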

Side-by-Side on the Criteria That Actually Matter

Probably should have opened with this section, honestly. Here’s the comparison without the fluff.

Cost at Scale

SQS — $0.40 per million requests. Scales linearly, stays cheap even at serious volume. Verdict: SQS wins for high-frequency workloads.

Step Functions — $0.025 per 1,000 state transitions on Standard Workflows. Gets expensive fast with multi-state, high-volume pipelines. Express Workflows help but add their own complexity. Verdict: Cost-effective for low-to-medium frequency, multi-step jobs.

Retry and Error Handling

SQS — Visibility timeout plus DLQ. Works fine, but anything sophisticated means custom logic you’re writing and maintaining yourself. Verdict: Adequate for single-step. Painful for multi-step.

Step Functions — Native retry config with backoff, per-state catch blocks, explicit failure routing built directly into the state machine definition. Verdict: Step Functions wins — and it’s not close.

Visibility Into Failures

SQS — CloudWatch logs and DLQ inspection. You’ll find the failure eventually, with enough digging. Verdict: Workable but slow.

Step Functions — Console execution history with input and output captured at every single state transition. Verdict: Step Functions wins. Debugging time drops dramatically.

Developer Complexity

SQS — Low setup friction. Lambda trigger, DLQ config, done. Multi-step patterns require significant custom code that hides complexity rather than eliminating it. Verdict: Simple jobs stay simple. Complex jobs get quietly complicated.

Step Functions: State machine definitions have a learning curve. Amazon States Language is verbose JSON. It works for me, though YAML-based config alternatives never quite clicked. Higher upfront investment. Verdict: Lower long-term maintenance cost on anything genuinely complex.

Latency

SQS — Near-instant Lambda trigger. Minimal overhead. Verdict: SQS wins for latency-sensitive tasks.

Step Functions — Standard Workflows carry latency per state transition. Express Workflows are measurably faster and better suited here. Verdict: Not the right choice if milliseconds matter at each step.

Which One to Pick for Your Workload

Use SQS if your tasks are single-step or close to it, your volume is high — hundreds of thousands per day or more — your failure handling needs are basic, and developer speed matters more than observability right now. Image processing, notification delivery, log ingestion, webhook fan-out. SQS was built for exactly this.

Use Step Functions if your workflow has three or more steps that depend on each other, you need parallel branches coordinated and resolved together, your failure modes need specific per-step handling, or you need a clean audit trail of what happened in any given execution. Order processing, ML pipeline coordination, multi-service data transformation — Step Functions handles this natively in ways SQS simply can’t.

Use both together if you have high-volume ingestion feeding into a complex multi-step process. The hybrid pattern works well here. SQS absorbs the incoming burst — it’s genuinely good at this — then a Lambda triggered by SQS starts a Step Functions execution for each message. You get SQS buffering and decoupling at the intake layer, plus Step Functions orchestration and visibility during the actual processing. This pattern shows up constantly in batch processing systems and async API backends that need durability on the way in and coordination on the way through.
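
The glue Lambda for that hybrid can be quite small. This sketch takes the Step Functions client as a parameter so it can be exercised without AWS; `make_handler` and the choice to reuse the SQS message ID as the execution name are assumptions, while `start_execution` and its parameters are the real boto3 call.

```python
import json


def make_handler(sfn_client, state_machine_arn: str):
    """Build a Lambda handler that starts one execution per SQS message."""
    def handler(event: dict, context: object) -> dict:
        failures = []
        for record in event.get("Records", []):
            try:
                # Round-trip the body so malformed JSON fails here, not later.
                payload = json.dumps(json.loads(record["body"]))
                sfn_client.start_execution(
                    stateMachineArn=state_machine_arn,
                    # Reusing the message ID makes duplicate deliveries
                    # easier to spot in the execution list.
                    name=record["messageId"],
                    input=payload,
                )
            except Exception:
                # Leave the message on the queue for redelivery or the DLQ.
                failures.append({"itemIdentifier": record["messageId"]})
        return {"batchItemFailures": failures}
    return handler
```

In a real function you would build it once at import time, for example `handler = make_handler(boto3.client("stepfunctions"), os.environ["STATE_MACHINE_ARN"])`, with the environment variable name being whatever your deployment uses.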

The single-sentence verdict: if your task has more than two steps or you’ll spend real time debugging failures, use Step Functions; for everything else, SQS is faster to ship and cheaper to run.
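
If it helps to see the rules of thumb from this section in one place, here they are as a small function. The field names and the three-step threshold are just this article’s heuristics written down, not an official decision procedure.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    dependent_steps: int
    needs_parallel_join: bool = False
    needs_per_step_error_handling: bool = False
    needs_audit_trail: bool = False
    bursty_high_volume_intake: bool = False


def recommend(w: Workload) -> str:
    """Apply the article's heuristics to pick a starting point."""
    needs_orchestration = (
        w.dependent_steps >= 3
        or w.needs_parallel_join
        or w.needs_per_step_error_handling
        or w.needs_audit_trail
    )
    if needs_orchestration and w.bursty_high_volume_intake:
        return "SQS + Step Functions"
    if needs_orchestration:
        return "Step Functions"
    return "SQS"


image_resize = recommend(Workload(dependent_steps=1))
order_processing = recommend(Workload(dependent_steps=5, needs_audit_trail=True))
batch_ingest = recommend(Workload(dependent_steps=4, bursty_high_volume_intake=True))
```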
